Awareness of existence From Wikipedia, the free encyclopedia

Consciousness, at its simplest, is awareness of internal and external existence.[1] However, its nature has led to millennia of analyses, explanations, and debate by philosophers, scientists, and theologians. Opinions differ about what exactly needs to be studied or even considered consciousness. In some explanations, it is synonymous with the mind, and at other times, an aspect of it. In the past, it was one's "inner life", the world of introspection, of private thought, imagination, and volition.[2] Today, it often includes any kind of cognition, experience, feeling, or perception. It may be awareness, awareness of awareness, metacognition, or self-awareness, either continuously changing or not.[3][4] The disparate range of research, notions and speculations raises the question of whether the right questions are being asked.[5]

Examples of the range of descriptions, definitions or explanations are: ordered distinction between self and environment, simple wakefulness, one's sense of selfhood or soul explored by "looking within"; being a metaphorical "stream" of contents, or being a mental state, mental event, or mental process of the brain.

The words "conscious" and "consciousness" in the English language date to the 17th century, and the first recorded use of "conscious" as a simple adjective was applied figuratively to inanimate objects ("the conscious Groves", 1643).[6]: 175 It derived from the Latin conscius (con- "together" and scio "to know") which meant "knowing with" or "having joint or common knowledge with another", especially as in sharing a secret.[7] Thomas Hobbes in Leviathan (1651) wrote: "Where two, or more men, know of one and the same fact, they are said to be Conscious of it one to another".[8] There were also many occurrences in Latin writings of the phrase conscius sibi, which translates literally as "knowing with oneself", or in other words "sharing knowledge with oneself about something". This phrase has the figurative sense of "knowing that one knows", which is something like the modern English word "conscious", but it was rendered into English as "conscious to oneself" or "conscious unto oneself". For example, Archbishop Ussher wrote in 1613 of "being so conscious unto myself of my great weakness".[9]

The Latin conscientia, literally 'knowledge-with', first appears in Roman juridical texts by writers such as Cicero. It means a kind of shared knowledge with moral value, specifically what a witness knows of someone else's deeds.[10][11] Although René Descartes (1596–1650), writing in Latin, is generally taken to be the first philosopher to use conscientia in a way less like the traditional meaning and more like the way modern English speakers would use "conscience", his meaning is nowhere defined.[12] In Search after Truth (Regulæ ad directionem ingenii ut et inquisitio veritatis per lumen naturale, Amsterdam 1701) he wrote the word with a gloss: conscientiâ, vel interno testimonio (translatable as "conscience, or internal testimony").[13][14] It might mean the knowledge of the value of one's own thoughts.[12]

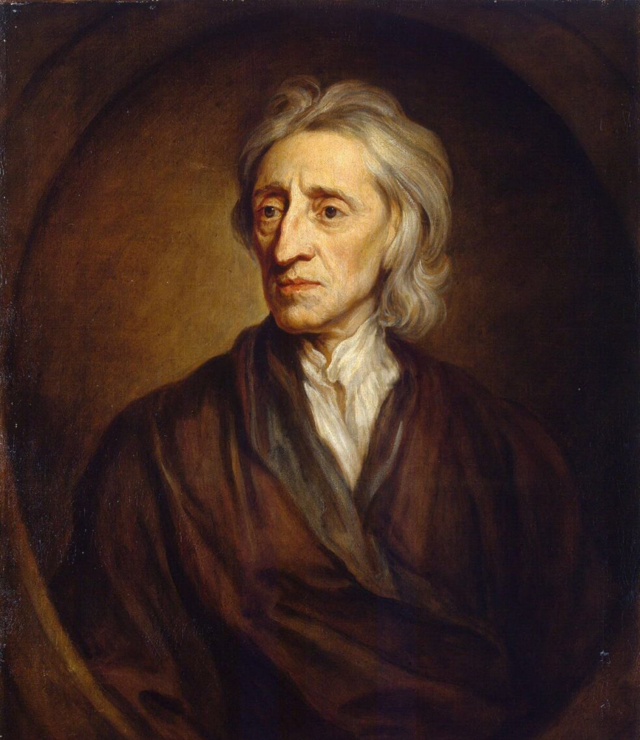

The origin of the modern concept of consciousness is often attributed to John Locke who defined the word in his Essay Concerning Human Understanding, published in 1690, as "the perception of what passes in a man's own mind".[15][16] The essay strongly influenced 18th-century British philosophy, and Locke's definition appeared in Samuel Johnson's celebrated Dictionary (1755).[17]

The French term conscience is defined roughly like English "consciousness" in the 1753 volume of Diderot and d'Alembert's Encyclopédie as "the opinion or internal feeling that we ourselves have from what we do".[18]

About forty meanings attributed to the term consciousness can be identified and categorized based on functions and experiences. The prospects for reaching any single, agreed-upon, theory-independent definition of consciousness appear remote.[19]

Scholars are divided as to whether Aristotle had a concept of consciousness. He does not use any single word or terminology that is clearly similar to the phenomenon or concept defined by John Locke. Victor Caston contends that Aristotle did have a concept more clearly similar to perception.[20]

Modern dictionary definitions of the word consciousness evolved over several centuries and reflect a range of seemingly related meanings, with some differences that have been controversial, such as the distinction between inward awareness and perception of the physical world, or the distinction between conscious and unconscious, or the notion of a mental entity or mental activity that is not physical.

The common-usage definitions of consciousness in Webster's Third New International Dictionary (1966) are as follows:

The Cambridge English Dictionary defines consciousness as "the state of understanding and realizing something".[21] The Oxford Living Dictionary defines consciousness as "[t]he state of being aware of and responsive to one's surroundings", "[a] person's awareness or perception of something", and "[t]he fact of awareness by the mind of itself and the world".[22]

Philosophers have attempted to clarify technical distinctions by using a jargon of their own. The corresponding entry in the Routledge Encyclopedia of Philosophy (1998) reads:

During the early 19th century, the emerging field of geology inspired a popular metaphor that the mind likewise had hidden layers "which recorded the past of the individual".[24]: 3 By 1875, most psychologists believed that "consciousness was but a small part of mental life",[24]: 3 and this idea underlies the goal of Freudian therapy, to expose the unconscious layer of the mind.

Other metaphors from various sciences inspired other analyses of the mind, for example: Johann Friedrich Herbart described ideas as being attracted and repulsed like magnets; John Stuart Mill developed the idea of "mental chemistry" and "mental compounds", and Edward B. Titchener sought the "structure" of the mind by analyzing its "elements". The abstract idea of states of consciousness mirrored the concept of states of matter.

In 1892, William James noted that the "ambiguous word 'content' has been recently invented instead of 'object'" and that the metaphor of mind as a container seemed to minimize the dualistic problem of how "states of consciousness can know" things, or objects;[25]: 465 by 1899 psychologists were busily studying the "contents of conscious experience by introspection and experiment".[26]: 365 Another popular metaphor was James's doctrine of the stream of consciousness, with continuity, fringes, and transitions.[25]: vii [lower-alpha 1]

James discussed the difficulties of describing and studying psychological phenomena, recognizing that commonly-used terminology was a necessary and acceptable starting point towards more precise, scientifically justified language. Prime examples were phrases like inner experience and personal consciousness:

The first and foremost concrete fact which every one will affirm to belong to his inner experience is the fact that consciousness of some sort goes on. 'States of mind' succeed each other in him. [...] But everyone knows what the terms mean [only] in a rough way; [...] When I say every 'state' or 'thought' is part of a personal consciousness, 'personal consciousness' is one of the terms in question. Its meaning we know so long as no one asks us to define it, but to give an accurate account of it is the most difficult of philosophic tasks. [...] The only states of consciousness that we naturally deal with are found in personal consciousnesses, minds, selves, concrete particular I's and you's. [25]: 152–153

Prior to the 20th century, philosophers treated the phenomenon of consciousness as the "inner world [of] one's own mind", and introspection was the mind "attending to" itself,[lower-alpha 2] an activity seemingly distinct from that of perceiving the 'outer world' and its physical phenomena. In 1892 William James noted the distinction along with doubts about the inward character of the mind:

'Things' have been doubted, but thoughts and feelings have never been doubted. The outer world, but never the inner world, has been denied. Everyone assumes that we have direct introspective acquaintance with our thinking activity as such, with our consciousness as something inward and contrasted with the outer objects which it knows. Yet I must confess that for my part I cannot feel sure of this conclusion. [...] It seems as if consciousness as an inner activity were rather a postulate than a sensibly given fact...[25]: 467

By the 1960s, for many philosophers and psychologists who talked about consciousness, the word no longer meant the 'inner world' but an indefinite, large category called awareness, as in the following example:

It is difficult for modern Western man to grasp that the Greeks really had no concept of consciousness in that they did not class together phenomena as varied as problem solving, remembering, imagining, perceiving, feeling pain, dreaming, and acting on the grounds that all these are manifestations of being aware or being conscious.[28]: 4

Many philosophers and scientists have been unhappy about the difficulty of producing a definition that does not involve circularity or fuzziness.[29] In The Macmillan Dictionary of Psychology (1989 edition), Stuart Sutherland emphasized external awareness, and expressed a skeptical attitude more than a definition:

Consciousness—The having of perceptions, thoughts, and feelings; awareness. The term is impossible to define except in terms that are unintelligible without a grasp of what consciousness means. Many fall into the trap of equating consciousness with self-consciousness—to be conscious it is only necessary to be aware of the external world. Consciousness is a fascinating but elusive phenomenon: it is impossible to specify what it is, what it does, or why it has evolved. Nothing worth reading has been written on it.[29]

Using 'awareness', however, as a definition or synonym of consciousness is not a simple matter:

If awareness of the environment . . . is the criterion of consciousness, then even the protozoans are conscious. If awareness of awareness is required, then it is doubtful whether the great apes and human infants are conscious.[26]

Many philosophers have argued that consciousness is a unitary concept that is understood by the majority of people despite the difficulty philosophers have had defining it.[30] Max Velmans proposed that the "everyday understanding of consciousness" uncontroversially "refers to experience itself rather than any particular thing that we observe or experience" and he added that consciousness "is [therefore] exemplified by all the things that we observe or experience",[31]: 4 whether thoughts, feelings, or perceptions. Velmans noted however, as of 2009, that there was a deep level of "confusion and internal division"[31] among experts about the phenomenon of consciousness, because researchers lacked "a sufficiently well-specified use of the term...to agree that they are investigating the same thing".[31]: 3 He argued additionally that "pre-existing theoretical commitments" to competing explanations of consciousness might be a source of bias.

Within the "modern consciousness studies" community the technical phrase 'phenomenal consciousness' is a common synonym for all forms of awareness, or simply 'experience',[31]: 4 without differentiating between inner and outer, or between higher and lower types. With advances in brain research, "the presence or absence of experienced phenomena"[31]: 3 of any kind underlies the work of those neuroscientists who seek "to analyze the precise relation of conscious phenomenology to its associated information processing" in the brain.[31]: 10 This neuroscientific goal is to find the "neural correlates of consciousness" (NCC). One criticism of this goal is that it begins with a theoretical commitment to the neurological origin of all "experienced phenomena" whether inner or outer.[lower-alpha 3] Also, the fact that the easiest 'content of consciousness' to be so analyzed is "the experienced three-dimensional world (the phenomenal world) beyond the body surface"[31]: 4 invites another criticism, that most consciousness research since the 1990s, perhaps because of bias, has focused on processes of external perception.[33]

From a history of psychology perspective, Julian Jaynes rejected popular but "superficial views of consciousness"[2]: 447 especially those which equate it with "that vaguest of terms, experience".[24]: 8 In 1976 he insisted that if not for introspection, which for decades had been ignored or taken for granted rather than explained, there could be no "conception of what consciousness is"[24]: 18 and in 1990, he reaffirmed the traditional idea of the phenomenon called 'consciousness', writing that "its denotative definition is, as it was for Descartes, Locke, and Hume, what is introspectable".[2]: 450 Jaynes saw consciousness as an important but small part of human mentality, and he asserted: "there can be no progress in the science of consciousness until ... what is introspectable [is] sharply distinguished"[2]: 447 from the unconscious processes of cognition such as perception, reactive awareness and attention, and automatic forms of learning, problem-solving, and decision-making.[24]: 21-47

The cognitive science point of view—with an inter-disciplinary perspective involving fields such as psychology, linguistics and anthropology[34]—requires no agreed definition of "consciousness" but studies the interaction of many processes besides perception. For some researchers, consciousness is linked to some kind of "selfhood", for example to certain pragmatic issues such as the feeling of agency and the effects of regret[33] and action on experience of one's own body or social identity.[35] Similarly Daniel Kahneman, who focused on systematic errors in perception, memory and decision-making, has differentiated between two kinds of mental processes, or cognitive "systems":[36] the "fast" activities that are primary, automatic and "cannot be turned off",[36]: 22 and the "slow", deliberate, effortful activities of a secondary system "often associated with the subjective experience of agency, choice, and concentration".[36]: 13 Kahneman's two systems have been described as "roughly corresponding to unconscious and conscious processes".[37]: 8 The two systems can interact, for example in sharing the control of attention.[36]: 22 While System 1 can be impulsive, "System 2 is in charge of self-control",[36]: 26 and "When we think of ourselves, we identify with System 2, the conscious, reasoning self that has beliefs, makes choices, and decides what to think about and what to do".[36]: 21

Some have argued that we should eliminate the concept from our understanding of the mind, a position known as consciousness semanticism.[38]

In medicine, a "level of consciousness" terminology is used to describe a patient's arousal and responsiveness, which can be seen as a continuum of states ranging from full alertness and comprehension, through disorientation, delirium, loss of meaningful communication, and finally loss of movement in response to painful stimuli.[39] Issues of practical concern include how the level of consciousness can be assessed in severely ill, comatose, or anesthetized people, and how to treat conditions in which consciousness is impaired or disrupted.[40] The degree or level of consciousness is measured by standardized behavior observation scales such as the Glasgow Coma Scale.

While historically philosophers have defended various views on consciousness, surveys indicate that physicalism is now the dominant position among contemporary philosophers of mind.[41] For an overview of the field, approaches often include both historical perspectives (e.g., Descartes, Locke, Kant) and organization by key issues in contemporary debates. An alternative is to focus primarily on current philosophical stances and empirical

Philosophers differ from non-philosophers in their intuitions about what consciousness is.[42] While most people have a strong intuition for the existence of what they refer to as consciousness,[30] skeptics argue that this intuition is too narrow, either because the concept of consciousness is embedded in our intuitions, or because we all are illusions. Gilbert Ryle, for example, argued that the traditional understanding of consciousness depends on a Cartesian dualist outlook that improperly distinguishes between mind and body, or between mind and world. He proposed that we speak not of minds, bodies, and the world, but of entities, or identities, acting in the world. Thus, by speaking of "consciousness" we end up misleading ourselves into thinking that there is any such thing as consciousness separated from behavioral and linguistic understandings.[43]

Ned Block argued that discussions on consciousness often failed to properly distinguish phenomenal (P-consciousness) from access (A-consciousness), though these terms had been used before Block.[44] P-consciousness, according to Block, is raw experience: it is moving, colored forms, sounds, sensations, emotions and feelings with our bodies and responses at the center. These experiences, considered independently of any impact on behavior, are called qualia. A-consciousness, on the other hand, is the phenomenon whereby information in our minds is accessible for verbal report, reasoning, and the control of behavior. So, when we perceive, information about what we perceive is access conscious; when we introspect, information about our thoughts is access conscious; when we remember, information about the past is access conscious, and so on. Although some philosophers, such as Daniel Dennett, have disputed the validity of this distinction,[45] others have broadly accepted it. David Chalmers has argued that A-consciousness can in principle be understood in mechanistic terms, but that understanding P-consciousness is much more challenging: he calls this the hard problem of consciousness.[46]

Some philosophers believe that Block's two types of consciousness are not the end of the story. William Lycan, for example, argued in his book Consciousness and Experience that at least eight clearly distinct types of consciousness can be identified (organism consciousness; control consciousness; consciousness of; state/event consciousness; reportability; introspective consciousness; subjective consciousness; self-consciousness)—and that even this list omits several more obscure forms.[47]

There is also debate over whether A-consciousness and P-consciousness always coexist or whether they can exist separately. Although P-consciousness without A-consciousness is more widely accepted, there have been some hypothetical examples of A without P. Block, for instance, suggests the case of a "zombie" that is computationally identical to a person but without any subjectivity. However, he remains somewhat skeptical, concluding: "I don't know whether there are any actual cases of A-consciousness without P-consciousness, but I hope I have illustrated their conceptual possibility".[48]

Sam Harris observes: "At the level of your experience, you are not a body of cells, organelles, and atoms; you are consciousness and its ever-changing contents".[49] Seen in this way, consciousness is a subjectively experienced, ever-present field in which things (the contents of consciousness) come and go.

Christopher Tricker argues that this field of consciousness is symbolized by the mythical bird that opens the Daoist classic the Zhuangzi. This bird's name is Of a Flock (peng 鵬), yet its back is countless thousands of miles across and its wings are like clouds arcing across the heavens. "Like Of a Flock, whose wings arc across the heavens, the wings of your consciousness span to the horizon. At the same time, the wings of every other being's consciousness span to the horizon. You are of a flock, one bird among kin."[50]

Mental processes (such as consciousness) and physical processes (such as brain events) seem to be correlated; however, the specific nature of the connection is unknown.

The first influential philosopher to discuss this question specifically was Descartes, and the answer he gave is known as mind–body dualism. Descartes proposed that consciousness resides within an immaterial domain he called res cogitans (the realm of thought), in contrast to the domain of material things, which he called res extensa (the realm of extension).[51] He suggested that the interaction between these two domains occurs inside the brain, perhaps in a small midline structure called the pineal gland.[52]

Although it is widely accepted that Descartes explained the problem cogently, few later philosophers have been happy with his solution, and his ideas about the pineal gland have especially been ridiculed.[53] However, no alternative solution has gained general acceptance. Proposed solutions can be divided broadly into two categories: dualist solutions that maintain Descartes's rigid distinction between the realm of consciousness and the realm of matter but give different answers for how the two realms relate to each other; and monist solutions that maintain that there is really only one realm of being, of which consciousness and matter are both aspects. Each of these categories itself contains numerous variants. The two main types of dualism are substance dualism (which holds that the mind is formed of a distinct type of substance not governed by the laws of physics), and property dualism (which holds that the laws of physics are universally valid but cannot be used to explain the mind). The three main types of monism are physicalism (which holds that the mind consists of matter organized in a particular way), idealism (which holds that only thought or experience truly exists, and matter is merely an illusion), and neutral monism (which holds that both mind and matter are aspects of a distinct essence that is itself identical to neither of them). There are also, however, a large number of idiosyncratic theories that cannot cleanly be assigned to any of these schools of thought.[54]

Since the dawn of Newtonian science with its vision of simple mechanical principles governing the entire universe, some philosophers have been tempted by the idea that consciousness could be explained in purely physical terms. The first influential writer to propose such an idea explicitly was Julien Offray de La Mettrie, in his book Man a Machine (L'homme machine). His arguments, however, were very abstract.[55] The most influential modern physical theories of consciousness are based on psychology and neuroscience. Theories proposed by neuroscientists such as Gerald Edelman[56] and Antonio Damasio,[57] and by philosophers such as Daniel Dennett,[58] seek to explain consciousness in terms of neural events occurring within the brain. Many other neuroscientists, such as Christof Koch,[59] have explored the neural basis of consciousness without attempting to frame all-encompassing global theories. At the same time, computer scientists working in the field of artificial intelligence have pursued the goal of creating digital computer programs that can simulate or embody consciousness.[60]

A few theoretical physicists have argued that classical physics is intrinsically incapable of explaining the holistic aspects of consciousness, but that quantum theory may provide the missing ingredients. Several theorists have therefore proposed quantum mind (QM) theories of consciousness.[61] Notable theories falling into this category include the holonomic brain theory of Karl Pribram and David Bohm, and the Orch-OR theory formulated by Stuart Hameroff and Roger Penrose. Some of these QM theories offer descriptions of phenomenal consciousness, as well as QM interpretations of access consciousness. None of the quantum mechanical theories have been confirmed by experiment. Recent publications by G. Guerreschi, J. Cai, S. Popescu, and H. Briegel[62] could falsify proposals such as those of Hameroff, which rely on quantum entanglement in proteins. At the present time many scientists and philosophers consider the arguments for an important role of quantum phenomena to be unconvincing.[63] Empirical evidence weighs against the notion of quantum consciousness: an experiment on wave function collapse led by Catalina Curceanu in 2022 suggests that quantum consciousness, as proposed by Roger Penrose and Stuart Hameroff, is highly implausible.[64]

Apart from the general question of the "hard problem" of consciousness (which is, roughly speaking, the question of how mental experience can arise from a physical basis[65]), a more specialized question is how to square the subjective notion that we are in control of our decisions (at least in some small measure) with the customary view of causality that subsequent events are caused by prior events. The topic of free will is the philosophical and scientific examination of this conundrum.

Many philosophers consider experience to be the essence of consciousness, and believe that experience can only fully be known from the inside, subjectively. The problem of other minds is a philosophical problem traditionally stated as the following epistemological question: Given that I can only observe the behavior of others, how can I know that others have minds?[66] The problem of other minds is particularly acute for people who believe in the possibility of philosophical zombies, that is, people who think it is possible in principle to have an entity that is physically indistinguishable from a human being and behaves like a human being in every way but nevertheless lacks consciousness.[67] Related issues have also been studied extensively by Greg Littmann of the University of Illinois,[68] and by Colin Allen (a professor at the University of Pittsburgh) regarding the literature and research studying artificial intelligence in androids.[69]

The most commonly given answer is that we attribute consciousness to other people because we see that they resemble us in appearance and behavior; we reason that if they look like us and act like us, they must be like us in other ways, including having experiences of the sort that we do.[70] There are, however, a variety of problems with that explanation. For one thing, it seems to violate the principle of parsimony, by postulating an invisible entity that is not necessary to explain what we observe.[70] Some philosophers, such as Daniel Dennett in a research paper titled "The Unimagined Preposterousness of Zombies", argue that people who give this explanation do not really understand what they are saying.[71] More broadly, philosophers who do not accept the possibility of zombies generally believe that consciousness is reflected in behavior (including verbal behavior), and that we attribute consciousness on the basis of behavior. A more straightforward way of saying this is that we attribute experiences to people because of what they can do, including the fact that they can tell us about their experiences.[72]

The term "qualia" was introduced in philosophical literature by C. I. Lewis. The word is derived from Latin and means "of what sort". Qualia are basically the qualities or properties of something as perceived or experienced by an individual, like the scent of a rose, the taste of wine, or the pain of a headache. They are difficult to articulate or describe. The philosopher and scientist Daniel Dennett describes them as "the way things seem to us", while philosopher and cognitive scientist David Chalmers expanded on qualia as the "hard problem of consciousness" in the 1990s. When qualia are experienced, activity is stimulated in the brain, and these processes are called neural correlates of consciousness (NCCs). Many scientific studies have been done to attempt to link particular brain regions with emotions or experiences.[73][74][75]

Species which experience qualia are said to have sentience, which is central to the animal rights movement, because it includes the ability to experience pain and suffering.[73]

For many decades, consciousness as a research topic was avoided by the majority of mainstream scientists, because of a general feeling that a phenomenon defined in subjective terms could not properly be studied using objective experimental methods.[76] In 1975 George Mandler published an influential psychological study which distinguished between slow, serial, and limited conscious processes and fast, parallel and extensive unconscious ones.[77] The Science and Religion Forum[78] 1984 annual conference, 'From Artificial Intelligence to Human Consciousness' identified the nature of consciousness as a matter for investigation; Donald Michie was a keynote speaker. Starting in the 1980s, an expanding community of neuroscientists and psychologists have associated themselves with a field called Consciousness Studies, giving rise to a stream of experimental work published in books,[79] journals such as Consciousness and Cognition, Frontiers in Consciousness Research, Psyche, and the Journal of Consciousness Studies, along with regular conferences organized by groups such as the Association for the Scientific Study of Consciousness[80] and the Society for Consciousness Studies.

Modern medical and psychological investigations into consciousness are based on psychological experiments (including, for example, the investigation of priming effects using subliminal stimuli),[81] and on case studies of alterations in consciousness produced by trauma, illness, or drugs. Broadly viewed, scientific approaches are based on two core concepts. The first identifies the content of consciousness with the experiences that are reported by human subjects; the second makes use of the concept of consciousness that has been developed by neurologists and other medical professionals who deal with patients whose behavior is impaired. In either case, the ultimate goals are to develop techniques for assessing consciousness objectively in humans as well as other animals, and to understand the neural and psychological mechanisms that underlie it.[59]

Experimental research on consciousness presents special difficulties, due to the lack of a universally accepted operational definition. In the majority of experiments that are specifically about consciousness, the subjects are human, and the criterion used is verbal report: in other words, subjects are asked to describe their experiences, and their descriptions are treated as observations of the contents of consciousness.[82]

For example, subjects who stare continuously at a Necker cube usually report that they experience it "flipping" between two 3D configurations, even though the stimulus itself remains the same.[83] The objective is to understand the relationship between the conscious awareness of stimuli (as indicated by verbal report) and the effects the stimuli have on brain activity and behavior. In several paradigms, such as the technique of response priming, the behavior of subjects is clearly influenced by stimuli for which they report no awareness, and suitable experimental manipulations can lead to increasing priming effects despite decreasing prime identification (double dissociation).[84]

Verbal report is widely considered to be the most reliable indicator of consciousness, but it raises a number of issues.[85] For one thing, if verbal reports are treated as observations, akin to observations in other branches of science, then the possibility arises that they may contain errors—but it is difficult to make sense of the idea that subjects could be wrong about their own experiences, and even more difficult to see how such an error could be detected.[86] Daniel Dennett has argued for an approach he calls heterophenomenology, which means treating verbal reports as stories that may or may not be true, but his ideas about how to do this have not been widely adopted.[87] Another issue with verbal report as a criterion is that it restricts the field of study to humans who have language: this approach cannot be used to study consciousness in other species, pre-linguistic children, or people with types of brain damage that impair language. As a third issue, philosophers who dispute the validity of the Turing test may feel that it is possible, at least in principle, for verbal report to be dissociated from consciousness entirely: a philosophical zombie may give detailed verbal reports of awareness in the absence of any genuine awareness.[88]

Although verbal report is in practice the "gold standard" for ascribing consciousness, it is not the only possible criterion.[85] In medicine, consciousness is assessed as a combination of verbal behavior, arousal, brain activity, and purposeful movement. The last three of these can be used as indicators of consciousness when verbal behavior is absent.[89][90] The scientific literature regarding the neural bases of arousal and purposeful movement is very extensive. Their reliability as indicators of consciousness is disputed, however, due to numerous studies showing that alert human subjects can be induced to behave purposefully in a variety of ways in spite of reporting a complete lack of awareness.[84] Studies related to the neuroscience of free will have also shown that the influence consciousness has on decision-making is not always straightforward.[91]

Another approach applies specifically to the study of self-awareness, that is, the ability to distinguish oneself from others. In the 1970s Gordon Gallup developed an operational test for self-awareness, known as the mirror test. The test examines whether animals are able to differentiate between seeing themselves in a mirror and seeing other animals. The classic example involves placing a spot of coloring on the skin or fur near the individual's forehead and seeing if they attempt to remove it or at least touch the spot, thus indicating that they recognize that the individual they are seeing in the mirror is themselves.[92] Humans (older than 18 months) and other great apes, bottlenose dolphins, orcas, pigeons, European magpies and elephants have all been observed to pass this test.[93] Some other animals, such as pigs, have been shown to find food by looking into the mirror.[94]

Contingency awareness is another such approach: the conscious understanding of one's actions and their effects on one's environment.[95] It is recognized as a factor in self-recognition. The brain processes involved in contingency awareness and learning are believed to depend on an intact medial temporal lobe and on age. A 2020 study involving transcranial direct current stimulation, magnetic resonance imaging (MRI) and eyeblink classical conditioning supported the idea that the parietal cortex serves as a substrate for contingency awareness and that age-related disruption of this region is sufficient to impair awareness.[96]

A major part of the scientific literature on consciousness consists of studies that examine the relationship between the experiences reported by subjects and the activity that simultaneously takes place in their brains, that is, studies of the neural correlates of consciousness. The hope is to find activity in a particular part of the brain, or a particular pattern of global brain activity, that is strongly predictive of conscious awareness. Several brain imaging techniques, such as EEG and fMRI, have been used for physical measures of brain activity in these studies.[97]

Another idea that has drawn attention for several decades is that consciousness is associated with high-frequency (gamma band) oscillations in brain activity. This idea arose from proposals in the 1980s, by Christof von der Malsburg and Wolf Singer, that gamma oscillations could solve the so-called binding problem, by linking information represented in different parts of the brain into a unified experience.[98] Rodolfo Llinás, for example, proposed that consciousness results from recurrent thalamo-cortical resonance where the specific thalamocortical systems (content) and the non-specific (centromedial thalamus) thalamocortical systems (context) interact in the gamma band frequency via synchronous oscillations.[99]

A number of studies have shown that activity in primary sensory areas of the brain is not sufficient to produce consciousness: it is possible for subjects to report a lack of awareness even when areas such as the primary visual cortex (V1) show clear electrical responses to a stimulus.[100] Higher brain areas are seen as more promising, especially the prefrontal cortex, which is involved in a range of higher cognitive functions collectively known as executive functions.[101] There is substantial evidence that a "top-down" flow of neural activity (i.e., activity propagating from the frontal cortex to sensory areas) is more predictive of conscious awareness than a "bottom-up" flow of activity.[102] The prefrontal cortex is not the only candidate area, however: studies by Nikos Logothetis and his colleagues have shown, for example, that visually responsive neurons in parts of the temporal lobe reflect the visual perception in the situation when conflicting visual images are presented to different eyes (i.e., bistable percepts during binocular rivalry).[103] Furthermore, top-down feedback from higher to lower visual brain areas may be weaker or absent in the peripheral visual field, as suggested by some experimental data and theoretical arguments;[104] nevertheless humans can perceive visual inputs in the peripheral visual field arising from bottom-up V1 neural activities.[104][105] Meanwhile, bottom-up V1 activities for the central visual fields can be vetoed, and thus made invisible to perception, by the top-down feedback, when these bottom-up signals are inconsistent with the brain's internal model of the visual world.[104][105]

Modulation of neural responses may correlate with phenomenal experiences. In contrast to the raw electrical responses that do not correlate with consciousness, the modulation of these responses by other stimuli correlates surprisingly well with an important aspect of consciousness: namely with the phenomenal experience of stimulus intensity (brightness, contrast). In the research group of Danko Nikolić it has been shown that some of the changes in the subjectively perceived brightness correlated with the modulation of firing rates while others correlated with the modulation of neural synchrony.[106] An fMRI investigation suggested that these findings were strictly limited to the primary visual areas.[107] This indicates that, in the primary visual areas, changes in firing rates and synchrony can be considered as neural correlates of qualia, at least for some types of qualia.

In 2013, the perturbational complexity index (PCI) was proposed, a measure of the algorithmic complexity of the electrophysiological response of the cortex to transcranial magnetic stimulation. This measure was shown to be higher in individuals that are awake, in REM sleep or in a locked-in state than in those who are in deep sleep or in a vegetative state,[108] making it potentially useful as a quantitative assessment of consciousness states.
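The idea of algorithmic complexity behind such a measure can be illustrated with a toy calculation. The sketch below is not the published PCI algorithm. It assumes the cortical response has already been reduced to a string of 0s and 1s (a hypothetical preprocessing step), and it counts Lempel-Ziv-style phrases as a rough proxy for how compressible that string is. The function name `lz_phrase_count` and the two example strings are illustrative assumptions.

```python
def lz_phrase_count(bits: str) -> int:
    """Count phrases in an LZ78-style parse of a binary string.

    Each phrase is the shortest prefix of the remaining input that has
    not yet appeared as a phrase. More phrases means the string is
    harder to compress, i.e. more "complex" in this rough sense.
    This is an illustrative proxy, not the PCI computation itself.
    """
    seen = set()
    phrase = ""
    count = 0
    for bit in bits:
        phrase += bit
        if phrase not in seen:
            seen.add(phrase)
            count += 1
            phrase = ""
    if phrase:
        # Trailing fragment that repeats an earlier phrase still counts once.
        count += 1
    return count


# Hypothetical binarized responses: a stereotyped one and a more varied one.
uniform = "0" * 16
varied = "0110100110010110"
print(lz_phrase_count(uniform), lz_phrase_count(varied))
```

In this toy setting the stereotyped string yields fewer phrases than the varied one, which mirrors the qualitative claim that less differentiated responses score lower.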

Assuming that not only humans but even some non-mammalian species are conscious, a number of evolutionary approaches to the problem of neural correlates of consciousness open up. For example, assuming that birds are conscious (a common assumption among neuroscientists and ethologists due to the extensive cognitive repertoire of birds), there are comparative neuroanatomical ways to validate some of the principal, currently competing, mammalian consciousness–brain theories. The rationale for such a comparative study is that the avian brain deviates structurally from the mammalian brain. So how similar are they? What homologs can be identified? The general conclusion from the study by Butler et al.[109] is that some of the major theories for the mammalian brain[110][111][112] also appear to be valid for the avian brain. The structures assumed to be critical for consciousness in mammalian brains have homologous counterparts in avian brains. Thus the main portions of the theories of Crick and Koch,[110] Edelman and Tononi,[111] and Cotterill[112] seem to be compatible with the assumption that birds are conscious. Edelman also differentiates between what he calls primary consciousness (which is a trait shared by humans and non-human animals) and higher-order consciousness as it appears in humans alone along with human language capacity.[111] Certain aspects of the three theories, however, seem less easy to apply to the hypothesis of avian consciousness. For instance, the suggestion by Crick and Koch that layer 5 neurons of the mammalian brain have a special role seems difficult to apply to the avian brain, since the avian homologs have a different morphology. Likewise, the theory of Eccles[113][114] seems incompatible, since a structural homolog/analogue to the dendron has not been found in avian brains. The assumption of an avian consciousness also brings the reptilian brain into focus. The reason is the structural continuity between avian and reptilian brains, meaning that the phylogenetic origin of consciousness may be earlier than suggested by many leading neuroscientists.

Joaquin Fuster of UCLA has argued that the prefrontal cortex in humans, along with the areas of Wernicke and Broca, is of particular importance to the development of the human language capacities that are neuro-anatomically necessary for the emergence of higher-order consciousness in humans.[115]

A study in 2016 looked at lesions in specific areas of the brainstem that were associated with coma and vegetative states. A small region of the rostral dorsolateral pontine tegmentum in the brainstem was suggested to drive consciousness through functional connectivity with two cortical regions, the left ventral anterior insular cortex, and the pregenual anterior cingulate cortex. These three regions may work together as a triad to maintain consciousness.[116]

A wide range of empirical theories of consciousness have been proposed.[117][118][119] Adrian Doerig and colleagues list 13 notable theories,[119] while Anil Seth and Tim Bayne list 22 notable theories.[118]

Global workspace theory (GWT) is a cognitive architecture and theory of consciousness proposed by the cognitive psychologist Bernard Baars in 1988. Baars explains the theory with the metaphor of a theater, with conscious processes represented by an illuminated stage. This theater integrates inputs from a variety of unconscious and otherwise autonomous networks in the brain and then broadcasts them to unconscious networks (represented in the metaphor by a broad, unlit "audience"). The theory has since been expanded upon by other scientists including cognitive neuroscientist Stanislas Dehaene and Lionel Naccache.[120][121]

Integrated information theory (IIT), pioneered by neuroscientist Giulio Tononi in 2004, postulates that consciousness resides in the information being processed and arises once the information reaches a certain level of complexity. Additionally, IIT is one of the few leading theories of consciousness that attempts to create a 1:1 mapping between conscious states and precise, formal mathematical descriptions of those mental states. Proponents of this model suggest that it may provide a physical grounding for consciousness in neurons, as they provide the mechanism by which information is integrated. This also relates to the "hard problem of consciousness" proposed by David Chalmers. The theory remains controversial, because of its lack of credibility.[clarification needed][122][123][73]

Orchestrated objective reduction (Orch-OR), or the quantum theory of mind, was proposed by scientists Roger Penrose and Stuart Hameroff, and states that consciousness originates at the quantum level inside neurons. The mechanism is held to be a quantum process called objective reduction that is orchestrated by cellular structures called microtubules, which form the cytoskeleton around which the brain is built. The duo proposed that these quantum processes accounted for creativity, innovation, and problem-solving abilities. Penrose published his views in the book The Emperor's New Mind. In 2014, the discovery of quantum vibrations inside microtubules gave new life to the argument.[73]

In 2011, Graziano and Kastner[124] proposed the "attention schema" theory of awareness. In that theory, specific cortical areas, notably in the superior temporal sulcus and the temporo-parietal junction, are used to build the construct of awareness and attribute it to other people. The same cortical machinery is also used to attribute awareness to oneself. Damage to these cortical regions can lead to deficits in consciousness such as hemispatial neglect. In the attention schema theory, the value of explaining the feature of awareness and attributing it to a person is to gain a useful predictive model of that person's attentional processing. Attention is a style of information processing in which a brain focuses its resources on a limited set of interrelated signals. Awareness, in this theory, is a useful, simplified schema that represents attentional states. To be aware of X is explained by constructing a model of one's attentional focus on X.

The entropic brain is a theory of conscious states informed by neuroimaging research with psychedelic drugs. The theory suggests that the brain in primary states, such as rapid eye movement (REM) sleep, early psychosis, and the influence of psychedelic drugs, is in a disordered state; normal waking consciousness constrains some of this freedom and makes possible metacognitive functions such as internal self-administered reality testing and self-awareness.[125][126][127][128] Criticism has included questioning whether the theory has been adequately tested.[129]

In 2017, work by David Rudrauf and colleagues, including Karl Friston, applied the active inference paradigm to consciousness, leading to the projective consciousness model (PCM), a model of how sensory data is integrated with priors in a process of projective transformation. The authors argue that, while their model identifies a key relationship between computation and phenomenology, it does not completely solve the hard problem of consciousness or completely close the explanatory gap.[130]

In 2004, a proposal was made by molecular biologist Francis Crick (co-discoverer of the double helix), which stated that to bind together an individual's experience, a conductor of an orchestra is required. Together with neuroscientist Christof Koch, he proposed that this conductor would have to collate information rapidly from various regions of the brain. The duo reckoned that the claustrum was well suited for the task. However, Crick died while working on the idea.[73]

The proposal is backed by a study done in 2014, where a team at the George Washington University induced unconsciousness in a 54-year-old woman suffering from intractable epilepsy by stimulating her claustrum. The woman underwent depth electrode implantation and electrical stimulation mapping. The electrode between the left claustrum and anterior-dorsal insula was the one which induced unconsciousness. Correlation for interactions affecting medial parietal and posterior frontal channels during stimulation increased significantly as well. Their findings suggested that the left claustrum or anterior insula is an important part of a network that subserves consciousness, and that disruption of consciousness is related to increased EEG signal synchrony within frontal-parietal networks. However, this remains an isolated, and hence inconclusive, study.[73][131]

The emergence of consciousness during biological evolution remains a topic of ongoing scientific inquiry. The survival value of consciousness is still a matter of exploration and understanding. While consciousness appears to play a crucial role in human cognition, decision-making, and self-awareness, its adaptive significance across different species remains a subject of debate.

Some people question whether consciousness has any survival value. Some argue that consciousness is a by-product of evolution. Thomas Henry Huxley, for example, defends an epiphenomenalist theory of consciousness in an essay titled "On the Hypothesis that Animals are Automata, and its History". According to this theory, consciousness is a causally inert effect of neural activity, "as the steam-whistle which accompanies the work of a locomotive engine is without influence upon its machinery".[132] William James objects to this in his essay "Are We Automata?" with an evolutionary argument for mind-brain interaction: if the preservation and development of consciousness in biological evolution is a result of natural selection, it is plausible that consciousness has not only been influenced by neural processes, but has had a survival value itself; and it could only have had this if it had been efficacious.[133][134] Karl Popper develops a similar evolutionary argument in the book The Self and Its Brain.[135]

Opinions are divided on when and how consciousness first arose. It has been argued that consciousness emerged (i) exclusively with the first humans, (ii) exclusively with the first mammals, (iii) independently in mammals and birds, or (iv) with the first reptiles.[136] Other authors date the origins of consciousness to the first animals with nervous systems or early vertebrates in the Cambrian over 500 million years ago.[137] Donald Griffin suggests in his book Animal Minds a gradual evolution of consciousness.[138]

Regarding the primary function of conscious processing, a recurring idea in recent theories is that phenomenal states somehow integrate neural activities and information-processing that would otherwise be independent.[139] This has been called the integration consensus. Another example, proposed by Gerald Edelman, is the dynamic core hypothesis, which puts emphasis on reentrant connections that reciprocally link areas of the brain in a massively parallel manner.[140] Edelman also stresses the importance of the evolutionary emergence of higher-order consciousness in humans from the historically older trait of primary consciousness which humans share with non-human animals (see Neural correlates section above). These theories of integrative function present solutions to two classic problems associated with consciousness: differentiation and unity. They show how our conscious experience can discriminate between a virtually unlimited number of different possible scenes and details (differentiation) because it integrates those details from our sensory systems, while the integrative nature of consciousness in this view easily explains how our experience can seem unified as one whole despite all of these individual parts. However, it remains unspecified which kinds of information are integrated in a conscious manner and which kinds can be integrated without consciousness. Nor is it explained what specific causal role conscious integration plays, nor why the same functionality cannot be achieved without consciousness. Obviously not all kinds of information are capable of being disseminated consciously (e.g., neural activity related to vegetative functions, reflexes, unconscious motor programs, low-level perceptual analyses, etc.) and many kinds of information can be disseminated and combined with other kinds without consciousness, as in intersensory interactions such as the ventriloquism effect.[141] Hence it remains unclear why any of it is conscious. For a review of the differences between conscious and unconscious integrations, see the article by Ezequiel Morsella.[141]

As noted earlier, even among writers who consider consciousness to be well-defined, there is widespread dispute about which animals other than humans can be said to possess it.[142] Edelman has described this distinction as that of humans possessing higher-order consciousness while sharing the trait of primary consciousness with non-human animals (see previous paragraph). Thus, any examination of the evolution of consciousness is faced with great difficulties. Nevertheless, some writers have argued that consciousness can be viewed from the standpoint of evolutionary biology as an adaptation in the sense of a trait that increases fitness.[143] In his article "Evolution of consciousness", John Eccles argued that special anatomical and physical properties of the mammalian cerebral cortex gave rise to consciousness ("[a] psychon ... linked to [a] dendron through quantum physics").[144] Bernard Baars proposed that once in place, this "recursive" circuitry may have provided a basis for the subsequent development of many of the functions that consciousness facilitates in higher organisms.[145] Peter Carruthers has put forth one such potential adaptive advantage gained by conscious creatures by suggesting that consciousness allows an individual to make distinctions between appearance and reality.[146] This ability would enable a creature to recognize the likelihood that their perceptions are deceiving them (e.g. that water in the distance may be a mirage) and behave accordingly, and it could also facilitate the manipulation of others by recognizing how things appear to them for both cooperative and devious ends.

Other philosophers, however, have suggested that consciousness would not be necessary for any functional advantage in evolutionary processes.[147][148] No one has given a causal explanation, they argue, of why it would not be possible for a functionally equivalent non-conscious organism (i.e., a philosophical zombie) to achieve the very same survival advantages as a conscious organism. If evolutionary processes are blind to the difference between function F being performed by conscious organism O and non-conscious organism O*, it is unclear what adaptive advantage consciousness could provide.[149] As a result, an exaptive explanation of consciousness has gained favor with some theorists, who posit that consciousness did not evolve as an adaptation but was an exaptation arising as a consequence of other developments such as increases in brain size or cortical rearrangement.[137] Consciousness in this sense has been compared to the blind spot in the retina, which is not an adaptation of the retina but just a by-product of the way the retinal axons were wired.[150] Several scholars including Pinker, Chomsky, Edelman, and Luria have pointed to the emergence of human language as an important regulative mechanism of learning and memory in the context of the development of higher-order consciousness (see Neural correlates section above).

There are some brain states in which consciousness seems to be absent, including dreamless sleep or coma. There are also a variety of circumstances that can change the relationship between the mind and the world in less drastic ways, producing what are known as altered states of consciousness. Some altered states occur naturally; others can be produced by drugs or brain damage.[151] Altered states can be accompanied by changes in thinking, disturbances in the sense of time, feelings of loss of control, changes in emotional expression, alterations in body image and changes in meaning or significance.[152]

The two most widely accepted altered states are sleep and dreaming. Although dream sleep and non-dream sleep appear very similar to an outside observer, each is associated with a distinct pattern of brain activity, metabolic activity, and eye movement; each is also associated with a distinct pattern of experience and cognition. During ordinary non-dream sleep, people who are awakened report only vague and sketchy thoughts, and their experiences do not cohere into a continuous narrative. During dream sleep, in contrast, people who are awakened report rich and detailed experiences in which events form a continuous progression, which may however be interrupted by bizarre or fantastic intrusions.[153][failed verification] Thought processes during the dream state frequently show a high level of irrationality. Both dream and non-dream states are associated with severe disruption of memory: it usually disappears in seconds during the non-dream state, and in minutes after awakening from a dream unless actively refreshed.[154]

Research conducted on the effects of partial epileptic seizures on consciousness found that patients who have partial epileptic seizures experience altered states of consciousness.[155][156] In partial epileptic seizures, consciousness is impaired or lost while some aspects of consciousness, often automated behaviors, remain intact. Studies measuring the qualitative features of partial epileptic seizures found that patients exhibited an increase in arousal and became absorbed in the experience of the seizure, followed by difficulty in focusing and shifting attention.

A variety of psychoactive drugs, including alcohol, have notable effects on consciousness.[157] These range from a simple dulling of awareness produced by sedatives, to increases in the intensity of sensory qualities produced by stimulants, cannabis, empathogens–entactogens such as MDMA ("Ecstasy"), or most notably by the class of drugs known as psychedelics.[151] LSD, mescaline, psilocybin, dimethyltryptamine, and others in this group can produce major distortions of perception, including hallucinations; some users even describe their drug-induced experiences as mystical or spiritual in quality. The brain mechanisms underlying these effects are not as well understood as those induced by use of alcohol,[157] but there is substantial evidence that alterations in the brain system that uses the chemical neurotransmitter serotonin play an essential role.[158]

There has been some research into physiological changes in yogis and people who practise various techniques of meditation. Some research with brain waves during meditation has reported differences between those corresponding to ordinary relaxation and those corresponding to meditation. It has been disputed, however, whether there is enough evidence to count these as physiologically distinct states of consciousness.[159]

The most extensive study of the characteristics of altered states of consciousness was made by psychologist Charles Tart in the 1960s and 1970s. Tart analyzed a state of consciousness as made up of a number of component processes, including exteroception (sensing the external world); interoception (sensing the body); input-processing (seeing meaning); emotions; memory; time sense; sense of identity; evaluation and cognitive processing; motor output; and interaction with the environment.[160][self-published source] Each of these, in his view, could be altered in multiple ways by drugs or other manipulations. The components that Tart identified have not, however, been validated by empirical studies. Research in this area has not yet reached firm conclusions, but a recent questionnaire-based study identified eleven significant factors contributing to drug-induced states of consciousness: experience of unity; spiritual experience; blissful state; insightfulness; disembodiment; impaired control and cognition; anxiety; complex imagery; elementary imagery; audio-visual synesthesia; and changed meaning of percepts.[161]

The medical approach to consciousness is scientifically oriented. It derives from a need to treat people whose brain function has been impaired as a result of disease, brain damage, toxins, or drugs. In medicine, conceptual distinctions are considered useful to the degree that they can help to guide treatments. The medical approach focuses mostly on the amount of consciousness a person has: in medicine, consciousness is assessed as a "level" ranging from coma and brain death at the low end, to full alertness and purposeful responsiveness at the high end.[162]

Consciousness is of concern to patients and physicians, especially neurologists and anesthesiologists. Patients may have disorders of consciousness or may need to be anesthetized for a surgical procedure. Physicians may perform consciousness-related interventions such as instructing the patient to sleep, administering general anesthesia, or inducing medical coma.[162] Also, bioethicists may be concerned with the ethical implications of consciousness in medical cases such as that of Karen Ann Quinlan,[163] while neuroscientists may study patients with impaired consciousness in hopes of gaining information about how the brain works.[164]

In medicine, consciousness is examined using a set of procedures known as neuropsychological assessment.[89] There are two commonly used methods for assessing the level of consciousness of a patient: a simple procedure that requires minimal training, and a more complex procedure that requires substantial expertise. The simple procedure begins by asking whether the patient is able to move and react to physical stimuli. If so, the next question is whether the patient can respond in a meaningful way to questions and commands. If so, the patient is asked for name, current location, and current day and time. A patient who can answer all of these questions is said to be "alert and oriented times four" (sometimes denoted "A&Ox4" on a medical chart), and is usually considered fully conscious.[165]
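The simple procedure described above is a short chain of yes/no checks, which can be sketched as follows. This is an illustration of the sequence of questions only, not a clinical tool. The class name `BedsideObservation`, the function `simple_assessment`, and every returned label other than "A&Ox4" are hypothetical names chosen for this sketch.

```python
from dataclasses import dataclass


@dataclass
class BedsideObservation:
    """Hypothetical record of what an examiner observes at the bedside."""
    reacts_to_stimuli: bool       # moves and reacts to physical stimuli
    responds_meaningfully: bool   # responds to questions and commands
    knows_name: bool
    knows_location: bool
    knows_day: bool
    knows_time: bool


def simple_assessment(obs: BedsideObservation) -> str:
    """Walk through the checks in the order the text gives them."""
    if not obs.reacts_to_stimuli:
        return "no reaction to physical stimuli"
    if not obs.responds_meaningfully:
        return "reacts to stimuli, no meaningful response"
    # Count how many of the four orientation questions are answered.
    oriented = sum(
        [obs.knows_name, obs.knows_location, obs.knows_day, obs.knows_time]
    )
    if oriented == 4:
        return "A&Ox4"
    return f"responsive, {oriented} of 4 orientation questions answered"


patient = BedsideObservation(True, True, True, True, True, True)
print(simple_assessment(patient))
```

Each check is attempted only if the previous one succeeded, which matches the "if so, the next question is" structure of the procedure.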

The more complex procedure is known as a neurological examination, and is usually carried out by a neurologist in a hospital setting. A formal neurological examination runs through a precisely delineated series of tests, beginning with tests for basic sensorimotor reflexes, and culminating with tests for sophisticated use of language. The outcome may be summarized using the Glasgow Coma Scale, which yields a number in the range 3–15, with a score of 3 to 8 indicating coma, and 15 indicating full consciousness. The Glasgow Coma Scale has three subscales, measuring the best motor response (ranging from "no motor response" to "obeys commands"), the best eye response (ranging from "no eye opening" to "eyes opening spontaneously") and the best verbal response (ranging from "no verbal response" to "fully oriented"). There is also a simpler pediatric version of the scale, for children too young to be able to use language.[162]
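The arithmetic of the scale can be sketched in a few lines. The total is the sum of the three subscale scores, and the interpretation thresholds below are the ones stated in the text (3 to 8 for coma, 15 for full consciousness). The subscale maxima (eye 4, verbal 5, motor 6) are not given in the text and are taken here from the standard published scale. The function names and the "intermediate" label are assumptions made for illustration, and the sketch is not a clinical tool.

```python
def glasgow_coma_score(eye: int, verbal: int, motor: int) -> int:
    """Sum the three subscale scores after checking their ranges.

    Assumed ranges (standard scale, not stated in the text):
    eye 1-4, verbal 1-5, motor 1-6.
    """
    limits = {"eye": (eye, 4), "verbal": (verbal, 5), "motor": (motor, 6)}
    for name, (value, maximum) in limits.items():
        if not 1 <= value <= maximum:
            raise ValueError(f"{name} score must be between 1 and {maximum}")
    return eye + verbal + motor


def interpret(total: int) -> str:
    """Map a total score onto the bands mentioned in the text."""
    if not 3 <= total <= 15:
        raise ValueError("total must be between 3 and 15")
    if total <= 8:
        return "coma"
    if total == 15:
        return "full consciousness"
    return "intermediate"  # hypothetical label for scores 9-14


total = glasgow_coma_score(eye=4, verbal=5, motor=6)
print(total, interpret(total))
```

Because every subscale has a minimum of 1, the lowest possible total is 3 rather than 0, which is why the range in the text starts at 3.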

In 2013, an experimental procedure was developed to measure degrees of consciousness. It involves stimulating the brain with a magnetic pulse, measuring the resulting waves of electrical activity, and deriving a consciousness score based on the complexity of the brain activity.[166]

Medical conditions that inhibit consciousness are considered disorders of consciousness.[167] This category generally includes minimally conscious state and persistent vegetative state, but sometimes also includes the less severe locked-in syndrome and more severe chronic coma.[167][168] Differential diagnosis of these disorders is an active area of biomedical research.[169][170][171] Finally, brain death results in an irreversible disruption of consciousness.[167] While other conditions may cause a moderate deterioration (e.g., dementia and delirium) or transient interruption (e.g., grand mal and petit mal seizures) of consciousness, they are not included in this category.

| Disorder | Description |
|---|---|
| Locked-in syndrome | The patient has awareness, sleep-wake cycles, and meaningful behavior (viz., eye-movement), but is isolated due to quadriplegia and pseudobulbar palsy. |
| Minimally conscious state | The patient has intermittent periods of awareness and wakefulness and displays some meaningful behavior. |
| Persistent vegetative state | The patient has sleep-wake cycles, but lacks awareness and only displays reflexive and non-purposeful behavior. |
| Chronic coma | The patient lacks awareness and sleep-wake cycles and only displays reflexive behavior. |
| Brain death | The patient lacks awareness, sleep-wake cycles, and brain-mediated reflexive behavior. |

Medical experts increasingly view anosognosia as a disorder of consciousness.[172] Anosognosia is a Greek-derived term meaning "unawareness of disease". This is a condition in which patients are disabled in some way, most commonly as a result of a stroke, but either misunderstand the nature of the problem or deny that there is anything wrong with them.[173] The most frequently occurring form is seen in people who have experienced a stroke damaging the parietal lobe in the right hemisphere of the brain, giving rise to a syndrome known as hemispatial neglect, characterized by an inability to direct action or attention toward objects located to the left with respect to their bodies. Patients with hemispatial neglect are often paralyzed on the left side of the body, but sometimes deny being unable to move. When questioned about the obvious problem, the patient may avoid giving a direct answer, or may give an explanation that does not make sense. Patients with hemispatial neglect may also fail to recognize paralyzed parts of their bodies: one frequently mentioned case is of a man who repeatedly tried to throw his own paralyzed right leg out of the bed he was lying in, and when asked what he was doing, complained that somebody had put a dead leg into the bed with him. An even more striking type of anosognosia is Anton–Babinski syndrome, a rarely occurring condition in which patients become blind but claim to be able to see normally, and persist in this claim in spite of all evidence to the contrary.[174]

Of the eight types of consciousness in the Lycan classification, some are detectable in utero and others develop years after birth. Psychologist and educator William Foulkes studied children's dreams and concluded that prior to the shift in cognitive maturation that humans experience during ages five to seven,[175] children lack the Lockean consciousness that Lycan had labeled "introspective consciousness" and that Foulkes labels "self-reflection".[176] In a 2020 paper, Katherine Nelson and Robyn Fivush use "autobiographical consciousness" to label essentially the same faculty, and agree with Foulkes on the timing of this faculty's acquisition. Nelson and Fivush contend that "language is the tool by which humans create a new, uniquely human form of consciousness, namely, autobiographical consciousness".[177] Julian Jaynes had staked out these positions decades earlier.[178][179] Citing the developmental steps that lead the infant to autobiographical consciousness, Nelson and Fivush point to the acquisition of "theory of mind", calling theory of mind "necessary for autobiographical consciousness" and defining it as "understanding differences between one's own mind and others' minds in terms of beliefs, desires, emotions and thoughts". They write, "The hallmark of theory of mind, the understanding of false belief, occurs ... at five to six years of age".[180]

The topic of animal consciousness is beset by a number of difficulties. It poses the problem of other minds in an especially severe form, because non-human animals, lacking human language, cannot tell humans about their experiences.[181] Also, it is difficult to reason objectively about the question, because a denial that an animal is conscious is often taken to imply that it does not feel, that its life has no value, and that harming it is not morally wrong. Descartes, for example, has sometimes been blamed for mistreatment of animals because he believed that only humans have a non-physical mind.[182] Most people have a strong intuition that some animals, such as cats and dogs, are conscious, while others, such as insects, are not; but the sources of this intuition are not obvious, and it is often based on personal interactions with pets and other animals they have observed.[181]

Philosophers who consider subjective experience the essence of consciousness also generally believe, as a corollary, that the existence and nature of animal consciousness can never rigorously be known. Thomas Nagel spelled out this point of view in an influential essay titled "What Is It Like to Be a Bat?". He said that an organism is conscious "if and only if there is something that it is like to be that organism—something it is like for the organism"; and he argued that no matter how much we know about an animal's brain and behavior, we can never really put ourselves into the mind of the animal and experience its world in the way it does itself.[183] Other thinkers, such as Douglas Hofstadter, dismiss this argument as incoherent.[184] Several psychologists and ethologists have argued for the existence of animal consciousness by describing a range of behaviors that appear to show animals holding beliefs about things they cannot directly perceive; Donald Griffin's 2001 book Animal Minds reviews a substantial portion of the evidence.[138]

On July 7, 2012, eminent scientists from different branches of neuroscience gathered at the University of Cambridge for the Francis Crick Memorial Conference, which dealt with consciousness in humans and pre-linguistic consciousness in nonhuman animals. After the conference, they signed, in the presence of Stephen Hawking, the 'Cambridge Declaration on Consciousness', which summarizes the most important findings of the survey:

"We decided to reach a consensus and make a statement directed to the public that is not scientific. It's obvious to everyone in this room that animals have consciousness, but it is not obvious to the rest of the world. It is not obvious to the rest of the Western world or the Far East. It is not obvious to the society."[185]

"Convergent evidence indicates that non-human animals ..., including all mammals and birds, and other creatures, ... have the necessary neural substrates of consciousness and the capacity to exhibit intentional behaviors."[186]

The idea of an artifact made conscious is an ancient theme of mythology, appearing for example in the Greek myth of Pygmalion, who carved a statue that was magically brought to life, and in medieval Jewish stories of the Golem, a magically animated homunculus built of clay.[187] However, the possibility of actually constructing a conscious machine was probably first discussed by Ada Lovelace, in a set of notes written in 1842 about the Analytical Engine invented by Charles Babbage, a precursor (never built) to modern electronic computers. Lovelace was essentially dismissive of the idea that a machine such as the Analytical Engine could think in a humanlike way. She wrote:

It is desirable to guard against the possibility of exaggerated ideas that might arise as to the powers of the Analytical Engine. ... The Analytical Engine has no pretensions whatever to originate anything. It can do whatever we know how to order it to perform. It can follow analysis; but it has no power of anticipating any analytical relations or truths. Its province is to assist us in making available what we are already acquainted with.[188]

One of the most influential contributions to this question was an essay written in 1950 by pioneering computer scientist Alan Turing, titled Computing Machinery and Intelligence. Turing disavowed any interest in terminology, saying that even "Can machines think?" is too loaded with spurious connotations to be meaningful; but he proposed to replace all such questions with a specific operational test, which has become known as the Turing test.[189] To pass the test, a computer must be able to imitate a human well enough to fool interrogators. In his essay Turing discussed a variety of possible objections, and presented a counterargument to each of them. The Turing test is commonly cited in discussions of artificial intelligence as a proposed criterion for machine consciousness; it has provoked a great deal of philosophical debate. For example, Daniel Dennett and Douglas Hofstadter argue that anything capable of passing the Turing test is necessarily conscious,[190] while David Chalmers argues that a philosophical zombie could pass the test, yet fail to be conscious.[191] A third group of scholars have argued that, as technology advances and machines begin to display substantial signs of human-like behavior, the dichotomy between human consciousness and human-like consciousness becomes passé and issues of machine autonomy begin to prevail, as can already be observed in nascent form within contemporary industry and technology.[68][69] Jürgen Schmidhuber argues that consciousness is the result of compression.[192] As an agent sees a representation of itself recurring in the environment, the compression of this representation can be called consciousness.

In a lively exchange over what has come to be referred to as "the Chinese room argument", John Searle sought to refute the claim of proponents of what he calls "strong artificial intelligence (AI)" that a computer program can be conscious, though he does agree with advocates of "weak AI" that computer programs can be formatted to "simulate" conscious states. His own view is that consciousness has subjective, first-person causal powers by being essentially intentional due to the way human brains function biologically; conscious persons can perform computations, but consciousness is not inherently computational the way computer programs are. To make a Turing machine that speaks Chinese, Searle imagines a room with one monolingual English speaker (Searle himself, in fact), a book that designates a combination of Chinese symbols to be output paired with Chinese symbol input, and boxes filled with Chinese symbols. In this case, the English speaker is acting as a computer and the rulebook as a program. Searle argues that with such a machine, he would be able to process the inputs to outputs perfectly without having any understanding of Chinese, nor having any idea what the questions and answers could possibly mean. If the experiment were done in English, since Searle knows English, he would be able to take questions and give answers without any algorithms for English questions, and he would be effectively aware of what was being said and the purposes it might serve. Searle would pass the Turing test of answering the questions in both languages, but he is only conscious of what he is doing when he speaks English. Another way of putting the argument is to say that computer programs can pass the Turing test for processing the syntax of a language, but that the syntax cannot lead to semantic meaning in the way strong AI advocates hoped.[193][194]
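The role of the rulebook in the thought experiment can be pictured as a plain lookup table. The following is only an illustrative sketch, not anything Searle wrote: the names `RULEBOOK`, `FALLBACK`, and `room_operator`, and the sample entries, are assumptions made up for this example.

```python
# Hypothetical rulebook: pairs an input string of Chinese symbols with the
# string of symbols to hand back. The entries are invented for illustration.
RULEBOOK = {
    "你好吗？": "我很好，谢谢。",
    "你会说中文吗？": "会，我说得很流利。",
}

# Hypothetical default reply used when the input is not listed in the rulebook.
FALLBACK = "请再说一遍。"


def room_operator(symbols: str) -> str:
    """Play the part of the person in the room.

    The function only matches the shape of the input against the rulebook
    and copies out the paired output. Nothing in it represents what any
    symbol means.
    """
    return RULEBOOK.get(symbols, FALLBACK)


if __name__ == "__main__":
    print(room_operator("你好吗？"))
```

The operator produces suitable replies purely by matching character strings, which is the sense in which the argument separates syntax from semantic meaning.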

In the literature concerning artificial intelligence, Searle's essay has been second only to Turing's in the volume of debate it has generated.[195] Searle himself was vague about what extra ingredients it would take to make a machine conscious: all he proposed was that what was needed was "causal powers" of the sort that the brain has and that computers lack. But other thinkers sympathetic to his basic argument have suggested that the necessary (though perhaps still not sufficient) extra conditions may include the ability to pass not just the verbal version of the Turing test, but the robotic version,[196] which requires grounding the robot's words in the robot's sensorimotor capacity to categorize and interact with the things in the world that its words are about, Turing-indistinguishably from a real person. Turing-scale robotics is an empirical branch of research on embodied cognition and situated cognition.[197]

In 2014, Victor Argonov suggested a non-Turing test for machine consciousness based on a machine's ability to produce philosophical judgments.[198] He argues that a deterministic machine must be regarded as conscious if it is able to produce judgments on all problematic properties of consciousness (such as qualia or binding) while having no innate (preloaded) philosophical knowledge on these issues, no philosophical discussions while learning, and no informational models of other creatures in its memory (such models may implicitly or explicitly contain knowledge about these creatures' consciousness). However, this test can be used only to detect, but not to refute, the existence of consciousness. A positive result proves that a machine is conscious, but a negative result proves nothing. For example, the absence of philosophical judgments may be caused by a lack of intellect in the machine, not by an absence of consciousness.
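The one-sided logic of this test can be sketched in a few lines. This is a hedged illustration of the criteria as summarized above, not Argonov's own formalization: the class `MachineRecord`, its field names, and the function `argonov_verdict` are hypothetical, and the sketch takes for granted that the machine is deterministic.

```python
from dataclasses import dataclass


@dataclass
class MachineRecord:
    """Hypothetical summary of what is known about a deterministic machine."""
    judges_all_problematic_properties: bool  # e.g. qualia, binding
    has_innate_philosophical_knowledge: bool
    had_philosophical_discussions_while_learning: bool
    has_models_of_other_creatures: bool


def argonov_verdict(machine: MachineRecord) -> str:
    """Return "conscious" on a positive result, otherwise "inconclusive".

    The test never returns "not conscious": a negative result proves nothing.
    """
    # The machine must have had no outside source for its philosophical ideas.
    isolated = not (
        machine.has_innate_philosophical_knowledge
        or machine.had_philosophical_discussions_while_learning
        or machine.has_models_of_other_creatures
    )
    if machine.judges_all_problematic_properties and isolated:
        return "conscious"
    return "inconclusive"


if __name__ == "__main__":
    silent_machine = MachineRecord(False, False, False, False)
    print(argonov_verdict(silent_machine))
```

A machine that produces no philosophical judgments at all is reported as inconclusive rather than as lacking consciousness, matching the asymmetry described above.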

William James is usually credited with popularizing the idea that human consciousness flows like a stream, in his Principles of Psychology of 1890.

According to James, the "stream of thought" is governed by five characteristics:[199]

A similar concept appears in Buddhist philosophy, expressed by the Sanskrit term Citta-saṃtāna, which is usually translated as mindstream or "mental continuum". Buddhist teachings describe consciousness as manifesting moment to moment as sense impressions and mental phenomena that are continuously changing.[200] The teachings list six triggers that can result in the generation of different mental events.[200] These triggers are input from the five senses (seeing, hearing, smelling, tasting or touch sensations), or a thought (relating to the past, present or the future) that happens to arise in the mind. The mental events generated as a result of these triggers are feelings, perceptions, and intentions or behaviour. The moment-by-moment manifestation of the mindstream is said to happen in every person all the time. It even happens in a scientist who analyzes various phenomena in the world, or analyzes the material body, including the brain.[200] The manifestation of the mindstream is also described as being influenced by physical laws, biological laws, psychological laws, volitional laws, and universal laws.[200] The purpose of the Buddhist practice of mindfulness is to understand the inherent nature of consciousness and its characteristics.[201]

In the West, the primary impact of the idea has been on literature rather than science: as a narrative mode, "stream of consciousness" means writing in a way that attempts to portray the moment-to-moment thoughts and experiences of a character. This technique perhaps had its beginnings in the monologues of Shakespeare's plays and reached its fullest development in the novels of James Joyce and Virginia Woolf, although it has also been used by many other noted writers.[202]

Here, for example, is a passage from Joyce's Ulysses about the thoughts of Molly Bloom: