Semantic parsing

From Wikipedia, the free encyclopedia

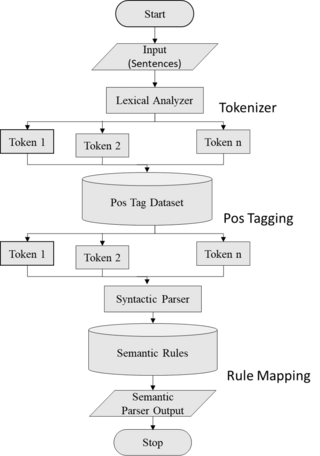

Semantic parsing is the task of converting a natural language utterance to a logical form: a machine-understandable representation of its meaning.[1] Semantic parsing can thus be understood as extracting the precise meaning of an utterance. Applications of semantic parsing include machine translation,[2] question answering,[1][3] ontology induction,[4] automated reasoning,[5] and code generation.[6][7] The phrase was first used in the 1970s by Yorick Wilks as the basis for machine translation programs working with only semantic representations.[8] Semantic parsing is one of the important tasks in computational linguistics and natural language processing.

Semantic parsing maps text to formal meaning representations. This contrasts with semantic role labeling and other forms of shallow semantic processing, which do not aim to produce complete formal meanings.[9] In computer vision, the term semantic parsing refers instead to the segmentation of 3D objects.[10][11]

History & Background

Early research on semantic parsing relied on manually written grammars[12] as well as on the use of logic programming.[13] In the 2000s, most work in this area involved the creation or learning and use of different grammars and lexicons on controlled tasks,[14][15] particularly general grammars such as SCFGs.[16] These grammars improved on manual grammars primarily because they leveraged the syntactic structure of the sentence, but they still could not cover enough variation and were not robust enough for real-world use. Following the development of advanced neural network techniques, especially the Seq2Seq model,[17] and the availability of powerful computational resources, neural semantic parsing emerged. It not only gave competitive results on existing datasets but was also robust to noise and did not require much supervision or manual intervention.

The transition from traditional parsing to neural semantic parsing has not been perfect, however. Even with its advantages, neural semantic parsing still fails to solve the problem at a deeper level. Neural models like Seq2Seq treat parsing as a sequential translation problem and learn patterns in a black-box manner, so it is difficult to tell whether a model is truly solving the problem. Intermediate efforts to modify Seq2Seq to incorporate syntax and semantic meaning have been attempted,[18][19] with marked improvements in results, but much ambiguity remains to be resolved.

Types

Shallow Semantic Parsing

Shallow semantic parsing is concerned with identifying entities in an utterance and labelling them with the roles they play. Shallow semantic parsing is sometimes known as slot-filling or frame semantic parsing, since its theoretical basis comes from frame semantics, wherein a word evokes a frame of related concepts and roles. Slot-filling systems are widely used in virtual assistants in conjunction with intent classifiers, which can be seen as mechanisms for identifying the frame evoked by an utterance.[20][21] Popular architectures for slot-filling are largely variants of an encoder-decoder model, wherein two recurrent neural networks (RNNs) are trained jointly to encode an utterance into a vector and to decode that vector into a sequence of slot labels.[22] This type of model is used in the Amazon Alexa spoken language understanding system.[20] Some approaches to this type of parsing use unsupervised learning techniques.
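The frame that a slot-filling system produces can be illustrated with a minimal Python sketch. It assumes the widely used BIO labelling convention (B- begins a slot, I- continues it, O is outside any slot), which the text above does not specify, and it hard-codes the intent and label sequence that a trained intent classifier and slot decoder might output. The function name `decode_frame` and the labels `list_flights`, `source`, and `destination` are hypothetical.

```python
from typing import Dict, List, Optional, Tuple


def decode_frame(intent: str, tokens: List[str], labels: List[str]) -> Tuple[str, Dict[str, str]]:
    """Group BIO slot labels into a frame: (intent, {slot name: slot value})."""
    slots: Dict[str, List[str]] = {}
    current: Optional[str] = None  # slot currently being filled, if any
    for token, label in zip(tokens, labels):
        if label.startswith("B-"):
            current = label[2:]
            slots[current] = [token]  # a repeated slot keeps only its last value
        elif label.startswith("I-") and label[2:] == current:
            slots[current].append(token)
        else:
            current = None  # "O" (or an I- tag with no matching B-) ends the slot
    return intent, {name: " ".join(words) for name, words in slots.items()}


tokens = "show me flights from New York to Dallas".split()
# Labels as a trained slot decoder might emit them (hard-coded here for illustration).
labels = ["O", "O", "O", "O", "B-source", "I-source", "O", "B-destination"]
frame = decode_frame("list_flights", tokens, labels)
print(frame)  # ('list_flights', {'source': 'New York', 'destination': 'Dallas'})
```

The I- label is what lets a multi-word value such as "New York" fill a single slot; the neural encoder and decoder themselves are omitted.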

Deep Semantic Parsing

Deep semantic parsing, also known as compositional semantic parsing, is concerned with producing precise meaning representations of utterances that can contain significant compositionality.[23] Shallow semantic parsers can parse utterances like "show me flights from Boston to Dallas" by classifying the intent as "list flights" and filling the slots "source" and "destination" with "Boston" and "Dallas", respectively. However, shallow semantic parsing cannot parse arbitrary compositional utterances, like "show me flights from Boston to anywhere that has flights to Juneau". Deep semantic parsing attempts to parse such utterances, typically by converting them to a formal meaning representation language. Recent work on compositional semantic parsing uses large language models to solve artificial compositional generalization tasks such as SCAN.[24]
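Why the second utterance is compositional becomes clearer when its meaning is written as a nested query: the destination is not a constant that fills a slot but the result of a sub-query. The Python sketch below evaluates both utterances over a small flight table; the table, the flight identifiers, and the helper names are made up for illustration, and nested function calls stand in for a real meaning representation language.

```python
from typing import List, Set, Tuple

# Hypothetical flight table: (flight id, origin, destination).
FLIGHTS: List[Tuple[str, str, str]] = [
    ("F1", "Boston", "Seattle"),
    ("F2", "Boston", "Dallas"),
    ("F3", "Seattle", "Juneau"),
    ("F4", "Dallas", "Denver"),
    ("F5", "Anchorage", "Juneau"),
]


def cities_with_flights_to(city: str) -> Set[str]:
    """Sub-query: every origin that has a flight to `city`."""
    return {origin for _, origin, dest in FLIGHTS if dest == city}


def flights_from_to_any(origin: str, destinations: Set[str]) -> List[str]:
    """Outer query: flights from `origin` whose destination lies in `destinations`."""
    return [fid for fid, src, dest in FLIGHTS if src == origin and dest in destinations]


# "show me flights from Boston to Dallas": flat, slot-fillable.
flat = flights_from_to_any("Boston", {"Dallas"})
# "show me flights from Boston to anywhere that has flights to Juneau":
# the destination argument is itself the result of a query.
nested = flights_from_to_any("Boston", cities_with_flights_to("Juneau"))
print(flat, nested)  # ['F2'] ['F1']
```

A slot-filling frame has no place to put `cities_with_flights_to("Juneau")`, which is the kind of structure a deep semantic parser must produce.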

Neural Semantic Parsing

Semantic parsers play a crucial role in natural language understanding systems because they transform natural language utterances into machine-executable logical structures or programmes. A well-established field of study, semantic parsing is used in voice assistants, question answering, instruction following, and code generation. The arrival of neural approaches has led researchers to rethink many of the assumptions that underpinned semantic parsing, substantially changing the models it employs. Although semantic neural networks and neural semantic parsing[25] both deal with natural language processing (NLP) and semantics, they are not the same. The models and executable formalisms used in semantic parsing research have traditionally depended strongly on concepts from formal semantics in linguistics, such as the λ-calculus expressions produced by a combinatory categorial grammar (CCG) parser. However, recent work with neural encoder-decoder semantic parsers has made possible more approachable formalisms, such as conventional programming languages, and models in the style of neural machine translation (NMT) that are considerably more accessible to a wider NLP audience. These contemporary neural approaches have, in turn, affected the field's understanding of semantic parsing.
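Because an encoder-decoder parser emits its output one token at a time, a tree-structured logical form has to be linearized into a token sequence for training and parsed back into a tree after decoding. The minimal Python sketch below shows that round trip for a bracketed logical form; the bracket notation and the predicate names `answer`, `capital`, and `texas` are illustrative assumptions, and the neural model itself is omitted.

```python
from typing import List, Tuple, Union

# A logical form is either an atom or a list (operator followed by arguments).
Tree = Union[str, List["Tree"]]


def linearize(tree: Tree) -> List[str]:
    """Flatten a nested logical form into the token sequence a decoder would emit."""
    if isinstance(tree, str):
        return [tree]
    tokens = ["("]
    for child in tree:
        tokens.extend(linearize(child))
    tokens.append(")")
    return tokens


def parse(tokens: List[str]) -> Tree:
    """Rebuild the tree from decoded tokens, raising an exception on malformed output."""
    def read(pos: int) -> Tuple[Tree, int]:
        token = tokens[pos]  # IndexError here means the brackets never closed
        if token == ")":
            raise ValueError("unexpected closing bracket")
        if token != "(":
            return token, pos + 1  # an atom
        children: List[Tree] = []
        pos += 1
        while tokens[pos] != ")":
            child, pos = read(pos)
            children.append(child)
        return children, pos + 1  # skip the closing bracket

    tree, end = read(0)
    if end != len(tokens):
        raise ValueError("extra tokens after the logical form")
    return tree


lf = ["answer", ["capital", "texas"]]
seq = linearize(lf)
print(" ".join(seq))  # ( answer ( capital texas ) )
```

A decoder can produce unbalanced brackets; this `parse` simply rejects such output, whereas some systems instead constrain decoding so that only well-formed logical forms can be generated.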

Representation languages

Early semantic parsers used highly domain-specific meaning representation languages,[26] with later systems using more extensible languages like Prolog,[27] lambda calculus,[28] lambda dependency-based compositional semantics (λ-DCS),[29] SQL,[30][31] Python,[32] Java,[33] the Alexa Meaning Representation Language,[20] and the Abstract Meaning Representation (AMR). Some work has used more exotic meaning representations, like query graphs,[34] semantic graphs,[35] or vector representations.[36]
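What makes these meaning representations useful is that they can be executed. The Python sketch below encodes a lambda-calculus logical form for "which states border Texas?", roughly λx. state(x) ∧ borders(x, texas), as a Python function and evaluates it against a tiny, hand-made U.S. geography database. The predicate names and facts are illustrative assumptions and cover only a small subset of U.S. states.

```python
from typing import Callable, FrozenSet, List, Set

# Hypothetical toy database (a small, incomplete subset of U.S. geography).
STATES: Set[str] = {"texas", "oklahoma", "new mexico", "louisiana", "arkansas", "colorado"}
BORDERS: Set[FrozenSet[str]] = {
    frozenset(pair)
    for pair in [
        ("texas", "oklahoma"),
        ("texas", "new mexico"),
        ("texas", "louisiana"),
        ("texas", "arkansas"),
        ("colorado", "new mexico"),
        ("colorado", "oklahoma"),
    ]
}


def state(x: str) -> bool:
    return x in STATES


def borders(x: str, y: str) -> bool:
    # Bordering is symmetric, so pairs are stored as unordered frozensets.
    return frozenset((x, y)) in BORDERS


# lambda x. state(x) AND borders(x, texas), written as a Python lambda.
logical_form: Callable[[str], bool] = lambda x: state(x) and borders(x, "texas")

# The denotation of a one-place lambda term is the set of entities that satisfy it.
answer: List[str] = sorted(e for e in STATES if logical_form(e))
print(answer)  # ['arkansas', 'louisiana', 'new mexico', 'oklahoma']
```

The same question could equally be written in SQL or Prolog; what changes is the executor, not the idea of mapping the utterance to something a machine can evaluate.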

Models

Most modern deep semantic parsing models either define a formal grammar for a chart parser or use RNNs to translate directly from natural language into a meaning representation language. Examples of systems built on formal grammars are the Cornell Semantic Parsing Framework,[37] Stanford University's Semantic Parsing with Execution (SEMPRE),[3] and the Word Alignment-based Semantic Parser (WASP).[38]
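A grammar-based semantic parser pairs each syntactic rule with a rule for composing meanings. The Python sketch below is a toy CKY chart parser over a hand-written grammar in Chomsky normal form: each lexical entry carries a logical-form fragment, and each binary rule states how to combine its children's fragments. The lexicon, category names, and string-based logical forms are illustrative assumptions; this is not the grammar formalism of the Cornell framework, SEMPRE, or WASP.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical lexicon: word -> (category, logical-form fragment or None).
LEXICON: Dict[str, Tuple[str, Optional[str]]] = {
    "flights": ("N", "flight(x)"),
    "from": ("P_FROM", None),
    "to": ("P_TO", None),
    "boston": ("CITY", "boston"),
    "dallas": ("CITY", "dallas"),
}

# Hypothetical binary rules: (left, right) -> (parent, how to compose the meanings).
Compose = Callable[[Optional[str], Optional[str]], str]
RULES: Dict[Tuple[str, str], Tuple[str, Compose]] = {
    ("P_FROM", "CITY"): ("PP", lambda _, city: f"from(x, {city})"),
    ("P_TO", "CITY"): ("PP", lambda _, city: f"to(x, {city})"),
    ("N", "PP"): ("N", lambda noun, pp: f"{noun} and {pp}"),
}


def cky_parse(sentence: str, goal: str = "N") -> Optional[str]:
    """Fill a CKY chart bottom-up and return the goal category's logical form."""
    words = sentence.lower().split()
    n = len(words)
    # chart[i][j] maps a category to one logical form for words[i:j].
    chart: List[List[Dict[str, Optional[str]]]] = [[{} for _ in range(n + 1)] for _ in range(n + 1)]
    for i, word in enumerate(words):
        if word not in LEXICON:
            return None  # unknown word: this toy grammar cannot cover the sentence
        category, meaning = LEXICON[word]
        chart[i][i + 1][category] = meaning
    for length in range(2, n + 1):
        for i in range(n - length + 1):
            j = i + length
            for k in range(i + 1, j):
                for left, left_sem in chart[i][k].items():
                    for right, right_sem in chart[k][j].items():
                        if (left, right) in RULES:
                            parent, compose = RULES[(left, right)]
                            # Keep only the first derivation per category (no scoring).
                            chart[i][j].setdefault(parent, compose(left_sem, right_sem))
    if goal not in chart[0][n]:
        return None
    return f"lambda x. {chart[0][n][goal]}"


print(cky_parse("flights from Boston to Dallas"))
# lambda x. flight(x) and from(x, boston) and to(x, dallas)
```

Real grammar-based parsers keep many competing derivations per chart cell and rank them with a learned model; keeping only the first one keeps this sketch short.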

Datasets

Datasets used for training statistical semantic parsing models are divided into two main classes based on application: those used for question answering via knowledge base queries, and those used for code generation.

Question answering

A standard dataset for question answering via semantic parsing is the Air Travel Information System (ATIS) dataset, which contains questions and commands about upcoming flights as well as corresponding SQL.[30] Another benchmark dataset is the GeoQuery dataset, which contains questions about the geography of the U.S. paired with corresponding Prolog.[27] The Overnight dataset is used to test how well semantic parsers adapt across multiple domains; it contains natural language queries about 8 different domains paired with corresponding λ-DCS expressions.[39] Semantic parsing has recently gained significant popularity as a result of new research, and many large companies, such as Google, Microsoft, and Amazon, are working in this area.

An accompanying figure from one recent work on semantic parsing for question answering[40] represents an example conversation from SPICE. The left column shows dialogue turns (T1–T3) with user (U) and system (S) utterances. The middle column shows the annotations provided in CSQA. Blue boxes on the right show the sequence of actions (AS) and corresponding SPARQL semantic parses (SP).
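In datasets such as ATIS, the target SQL is valuable because it can be executed to obtain an answer. The sketch below uses Python's built-in `sqlite3` module to run an ATIS-style query against a tiny in-memory table; the question, the table schema, the rows, and the query are made up for illustration and do not follow the ATIS dataset's actual schema.

```python
import sqlite3

# Hypothetical miniature flight table (not the real ATIS schema).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE flight (flight_id TEXT, from_city TEXT, to_city TEXT, departure_time INTEGER)"
)
conn.executemany(
    "INSERT INTO flight VALUES (?, ?, ?, ?)",
    [
        ("UA100", "BOSTON", "DENVER", 830),
        ("UA200", "BOSTON", "DENVER", 1415),
        ("DL300", "BOSTON", "ATLANTA", 900),
    ],
)

# A question and the SQL a semantic parser might produce for it.
# "morning" is interpreted as departing before 12:00, an assumption of this sketch.
question = "show me morning flights from boston to denver"
predicted_sql = (
    "SELECT flight_id FROM flight "
    "WHERE from_city = 'BOSTON' AND to_city = 'DENVER' AND departure_time < 1200"
)

answer = [row[0] for row in conn.execute(predicted_sql)]
print(question, "->", answer)  # show me morning flights from boston to denver -> ['UA100']
conn.close()
```

Comparing the rows returned by a predicted query with those returned by the gold query is one way to score a parser without requiring the SQL strings to match exactly.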

Code generation

Popular datasets for code generation include two trading card datasets that link the text that appears on cards to code that precisely represents those cards. One was constructed linking Magic: The Gathering card texts to Java snippets; the other by linking Hearthstone card texts to Python snippets.[33] The IFTTT dataset[41] uses a specialized domain-specific language with short conditional commands. The Django dataset[42] pairs Python snippets with English and Japanese pseudocode describing them. The RoboCup dataset[43] pairs English rules with their representations in a domain-specific language that can be understood by virtual soccer-playing robots.

Application Areas

Within the field of natural language processing (NLP), semantic parsing deals with transforming human language into a format that machines can process more easily. This is useful in a number of contexts:

- Voice Assistants and Chatbots: Semantic parsing improves user interaction with devices such as smart speakers and with customer-service chatbots by helping them understand and respond to user requests made in natural language.

- Information Retrieval: It improves the comprehension and processing of user queries by search engines and databases, resulting in more precise and pertinent search results.

- Machine Translation: Translating accurately from one language into another requires understanding the semantics of the source text, so semantic parsing can improve the quality and contextual accuracy of translations.

- Text Analytics: Semantic parsing helps extract meaningful insights from text data, benefiting business intelligence and social media monitoring through tasks such as sentiment analysis, topic modelling, and trend analysis.

- Question Answering Systems: In systems such as IBM Watson, semantic parsing helps interpret natural language queries in order to deliver precise responses. Such systems are particularly helpful in areas such as customer service and educational resources.

- Command and Control Systems: Semantic parsing aids in the accurate interpretation of voice or text commands used to control systems in applications such as software interfaces or smart homes.

- Content Categorization: It is a useful tool for online publishing and digital content management as it aids in the classification and organization of vast amounts of textual material by analyzing its semantic content.

- Accessibility Technologies: Semantic parsing helps create tools for people with disabilities, such as sign language interpretation and text-to-speech conversion.

- Legal and Healthcare Informatics: Semantic parsing can extract and structure important information from legal documents and medical records to support research and decision-making.

Semantic parsing aims to improve various applications' efficiency and efficacy by bridging the gap between human language and machine processing in each of these domains.

Evaluation

As in syntactic parsing, the performance of semantic parsers is measured using standard evaluation metrics. These include the exact-match ratio (the percentage of sentences that were parsed perfectly) and precision, recall, and F1 score, computed from the correct constituency or dependency assignments in the parse: precision is the number of correct assignments divided by the number in the hypothesis parse, recall is that number divided by the number in the reference parse, and F1 is their harmonic mean. The latter are also known as the PARSEVAL metrics.[44]
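These metrics can be computed as in the Python sketch below, assuming each parse is represented as a set of labelled constituents (label, start token, end token) and that counts are pooled over the whole corpus before dividing, a common convention. A constituent counts as correct when it appears in both the hypothesis and the reference. The parses are illustrative, and standard PARSEVAL tooling such as evalb applies further conventions that are not modelled here.

```python
from typing import List, Set, Tuple

Constituent = Tuple[str, int, int]  # (label, start token, end token)
ParsePair = Tuple[Set[Constituent], Set[Constituent]]  # (hypothesis, reference)


def parseval(pairs: List[ParsePair]) -> Tuple[float, float, float]:
    """Corpus-level precision, recall, and F1 over labelled constituents."""
    correct = sum(len(hyp & ref) for hyp, ref in pairs)
    hyp_total = sum(len(hyp) for hyp, _ in pairs)
    ref_total = sum(len(ref) for _, ref in pairs)
    precision = correct / hyp_total if hyp_total else 0.0
    recall = correct / ref_total if ref_total else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def exact_match(pairs: List[ParsePair]) -> float:
    """Fraction of sentences whose hypothesis parse equals the reference exactly."""
    return sum(hyp == ref for hyp, ref in pairs) / len(pairs) if pairs else 0.0


# Illustrative parses for two sentences.
sentence1 = (
    {("S", 0, 5), ("NP", 0, 1), ("VP", 1, 5), ("NP", 2, 4), ("PP", 3, 5)},  # hypothesis
    {("S", 0, 5), ("NP", 0, 1), ("VP", 1, 5), ("NP", 2, 5)},  # reference
)
sentence2 = (
    {("S", 0, 2), ("NP", 0, 1), ("VP", 1, 2)},
    {("S", 0, 2), ("NP", 0, 1), ("VP", 1, 2)},
)
pairs = [sentence1, sentence2]
p, r, f1 = parseval(pairs)
em = exact_match(pairs)
print(f"P={p:.3f} R={r:.3f} F1={f1:.3f} EM={em:.2f}")  # P=0.750 R=0.857 F1=0.800 EM=0.50
```

Here 6 of the 8 hypothesis constituents are correct (precision 0.75) and 6 of the 7 reference constituents are recovered (recall about 0.857), while only the second sentence is an exact match.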

See also

References
