Joint entropy

Measure of information in probability and information theory / From Wikipedia, the free encyclopedia

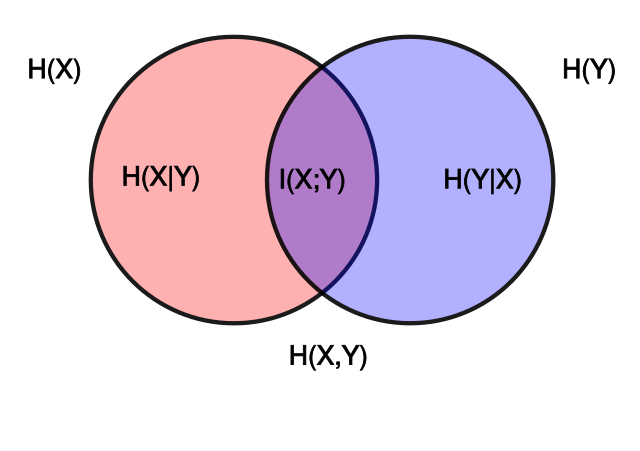

In information theory, joint entropy is a measure of the uncertainty associated with a set of random variables.[2]
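
As a rough illustration of this definition, the sketch below computes the joint entropy of two discrete variables from their joint probability table, using the standard Shannon form H(X, Y) = −Σ p(x, y) log₂ p(x, y), measured in bits. The function name `joint_entropy`, the dictionary representation of the distribution, and the coin example are assumptions made for illustration; they do not come from the article.

```python
import math

def joint_entropy(joint_pmf):
    """Shannon joint entropy, in bits, of a joint pmf given as {(x, y): p}."""
    total = sum(joint_pmf.values())
    if not math.isclose(total, 1.0):
        raise ValueError("probabilities must sum to 1")
    # Zero-probability outcomes contribute nothing (0 * log 0 is taken as 0).
    return -sum(p * math.log2(p) for p in joint_pmf.values() if p > 0)

# Hypothetical example: two independent fair coins, four equally likely outcomes.
fair_coins = {(x, y): 0.25 for x in "HT" for y in "HT"}
print(joint_entropy(fair_coins))  # 2.0
```

Two independent fair coins carry one bit of uncertainty each, so the pair carries two bits. If the coins were perfectly correlated (only the outcomes HH and TT, each with probability 0.5), the same function would give one bit, since knowing one coin then determines the other.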