AlexNet

Influential 2012 convolutional neural network

AlexNet is a convolutional neural network (CNN) architecture designed in 2012 by Alex Krizhevsky in collaboration with Ilya Sutskever and Geoffrey Hinton, who was Krizhevsky's Ph.D. advisor at the University of Toronto. It had 60 million parameters and 650,000 neurons.[1]

| Developer(s) | Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton |
|---|---|
| Initial release | Jun 28, 2011 |
| Repository | code |
| Written in | CUDA, C++ |
| Type | Convolutional neural network |
| License | New BSD License |

The original paper's primary result was that the depth of the model was essential for its high performance. Training such a deep model was computationally expensive, but was made feasible by using graphics processing units (GPUs).[1]

The three formed team SuperVision and submitted AlexNet to the ImageNet Large Scale Visual Recognition Challenge on September 30, 2012.[2] The network achieved a top-5 error of 15.3%, more than 10.8 percentage points lower than that of the runner-up.

The architecture influenced a large body of subsequent work in deep learning, especially in applying neural networks to computer vision.

Architecture

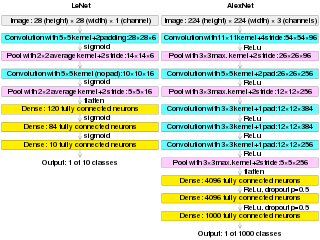

AlexNet contains eight layers: the first five are convolutional layers, some of them followed by max-pooling layers, and the last three are fully connected layers. The network, except the last layer, is split into two copies, each run on one GPU.[1] The entire structure can be written as

(CNN → RN → MP)² → (CNN³ → MP) → (FC → DO)² → Linear → softmax

where

- CNN = convolutional layer (with ReLU activation)

- RN = local response normalization

- MP = max-pooling

- FC = fully connected layer (with ReLU activation)

- Linear = fully connected layer (without activation)

- DO = dropout

It used the non-saturating ReLU activation function, which trained better than tanh and sigmoid.[1]

Because the network did not fit onto a single Nvidia GTX 580 3GB GPU, it was split into two halves, one on each GPU.[1]: Section 3.2
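The layer structure above can be checked against the 60-million-parameter figure with a short count. This is a minimal sketch: the kernel sizes and channel counts are taken from the original AlexNet paper rather than from this article, and the names `CONV_LAYERS`, `FC_LAYERS`, and `count_parameters` are illustrative only. Because of the two-GPU split, the filters in convolutional layers 2, 4, and 5 see only the channels held on their own GPU (48 or 192), which is assumed here.

```python
# Layer shapes as described in the original AlexNet paper (assumed here;
# this article does not list kernel sizes for every layer).
# Conv entry: (name, kernel_size, input_channels_per_filter, output_channels).
# Layers 2, 4 and 5 only see the channels on their own GPU (48 or 192).
CONV_LAYERS = [
    ("conv1", 11, 3, 96),
    ("conv2", 5, 48, 256),
    ("conv3", 3, 256, 384),
    ("conv4", 3, 192, 384),
    ("conv5", 3, 192, 256),
]

# Fully connected entry: (name, inputs, outputs).
# The first one reads the 6x6x256 output of the last max-pooling layer.
FC_LAYERS = [
    ("fc6", 6 * 6 * 256, 4096),
    ("fc7", 4096, 4096),
    ("fc8", 4096, 1000),
]


def count_parameters():
    """Return a dict mapping layer name to weights plus biases."""
    counts = {}
    for name, kernel, c_in, c_out in CONV_LAYERS:
        counts[name] = kernel * kernel * c_in * c_out + c_out
    for name, n_in, n_out in FC_LAYERS:
        counts[name] = n_in * n_out + n_out
    return counts


counts = count_parameters()
for name, n in counts.items():
    print(f"{name}: {n:,}")
print(f"total: {sum(counts.values()):,}")
```

Under these assumptions the total is 60,965,224, in line with the quoted 60 million, and the three fully connected layers account for most of it.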

Training

The ImageNet training set contained 1.2 million images. The model was trained for 90 epochs over a period of five to six days using two Nvidia GTX 580 GPUs (3GB each).[1] These GPUs have a theoretical performance of 1.581 TFLOPS in float32 and were priced at US$500 upon release.[3] Each forward pass of AlexNet required approximately 1.43 GFLOPs.[4] Based on these values, the two GPUs together were theoretically capable of performing over 2,200 forward passes per second under ideal conditions.
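The throughput estimate above is simple arithmetic on the quoted figures, sketched below. The constant and function names are illustrative. The second function is an additional back-of-the-envelope figure derived from the 90 epochs, 1.2 million images, and five-to-six-day duration; it is not directly comparable to the ideal forward-pass rate, since training also includes the backward pass, data loading, and other overhead.

```python
# All numeric values below are the ones quoted in the text.
GPU_PEAK_FLOPS = 1.581e12        # float32 peak of one GTX 580, in FLOP/s
NUM_GPUS = 2
FORWARD_FLOPS_PER_IMAGE = 1.43e9  # cost of one AlexNet forward pass
EPOCHS = 90
TRAIN_IMAGES = 1.2e6


def ideal_forward_passes_per_second():
    """Upper bound assuming both GPUs run at peak with no overhead."""
    return NUM_GPUS * GPU_PEAK_FLOPS / FORWARD_FLOPS_PER_IMAGE


def observed_training_images_per_second(days):
    """Average images processed per second over the whole training run."""
    return EPOCHS * TRAIN_IMAGES / (days * 24 * 3600)


print(f"ideal forward passes/s: {ideal_forward_passes_per_second():.0f}")
for days in (5, 6):
    rate = observed_training_images_per_second(days)
    print(f"training images/s over {days} days: {rate:.0f}")
```

The ideal rate comes to roughly 2,211 forward passes per second, while the training run itself averaged roughly 208 to 250 images per second.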

AlexNet was trained using stochastic gradient descent with momentum, with a batch size of 128 examples, momentum of 0.9, and weight decay of 0.0005. The learning rate started at 10⁻² and was manually decreased 10-fold whenever the validation error appeared to stop decreasing. It was reduced three times during training, ending at 10⁻⁵.
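The update rule and learning-rate schedule can be sketched on a one-parameter toy problem. The form of the update (velocity decayed by the momentum, with weight decay scaled by the learning rate) follows the original paper. The toy objective, the fixed number of steps per stage, and all function names are assumptions for illustration; in the actual training the learning rate was lowered manually based on validation error.

```python
MOMENTUM = 0.9
WEIGHT_DECAY = 0.0005


def sgd_momentum_step(w, v, grad, lr):
    """One update: v <- 0.9*v - 0.0005*lr*w - lr*grad, then w <- w + v."""
    v_next = MOMENTUM * v - WEIGHT_DECAY * lr * w - lr * grad
    return w + v_next, v_next


def learning_rate_schedule(initial=1e-2, reductions=3, factor=10.0):
    """Learning rates used across training: the initial one plus each 10-fold cut."""
    return [initial / factor ** i for i in range(reductions + 1)]


def train_toy(steps_per_stage=200):
    """Minimize the hypothetical objective 0.5 * (w - 3)^2 with the schedule above."""
    w, v = 0.0, 0.0
    for lr in learning_rate_schedule():
        for _ in range(steps_per_stage):
            grad = w - 3.0  # gradient of the toy objective
            w, v = sgd_momentum_step(w, v, grad, lr)
    return w


print(learning_rate_schedule())
print(train_toy())
```

The toy run settles slightly below 3 rather than exactly at it, because the weight-decay term pulls the parameter toward zero.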

It used two forms of data augmentation, both computed on the fly on the CPU, thus "computationally free":

- Extracting random 224×224 patches (and their horizontal reflections) from the original 256×256 images. This increases the size of the training set 2048-fold.

- Randomly shifting the RGB value of each image along the three principal directions of the RGB values of its pixels.
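The first augmentation and its 2048-fold figure can be sketched as follows. This is a minimal illustration on an image stored as a list of pixel rows; the function names are hypothetical. The factor 2048 corresponds to 32 × 32 crop offsets times 2 reflections, whereas the crop function below also allows the last offset on each axis (33 positions), so the count is best read as approximate.

```python
import random


def random_crop_and_flip(image, crop=224, rng=random):
    """Return a random crop x crop patch, mirrored horizontally half the time.

    `image` is a list of rows, each row a list of pixels.
    """
    height, width = len(image), len(image[0])
    top = rng.randrange(height - crop + 1)
    left = rng.randrange(width - crop + 1)
    patch = [row[left:left + crop] for row in image[top:top + crop]]
    if rng.random() < 0.5:
        patch = [row[::-1] for row in patch]  # horizontal reflection
    return patch


def augmentation_factor(full=256, crop=224):
    """Offsets per axis squared, times 2 for the reflections."""
    offsets = full - crop  # 32, the count implied by the 2048 figure
    return offsets * offsets * 2


# Placeholder 256x256 image whose pixels record their own coordinates.
image = [[(r, c) for c in range(256)] for r in range(256)]
patch = random_crop_and_flip(image, rng=random.Random(0))
print(len(patch), len(patch[0]), augmentation_factor())
```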

It used local response normalization, and dropout regularization with drop probability 0.5.

All weights were drawn from a Gaussian distribution with mean 0 and standard deviation 0.01. Biases in convolutional layers 2, 4, and 5, and in all fully connected layers, were initialized to the constant 1 to avoid the dying ReLU problem.
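The initialization scheme can be sketched per layer as below. The layer names and the helper `init_layer` are illustrative. Setting the biases of the remaining layers (convolutional layers 1 and 3) to 0 follows the original paper and is not stated in the text above.

```python
import random

# Layers whose biases start at 1, as listed in the text.
BIAS_ONE_LAYERS = {"conv2", "conv4", "conv5", "fc6", "fc7", "fc8"}


def init_layer(name, num_weights, num_biases, rng):
    """Gaussian weights (mean 0, std 0.01) and constant biases for one layer."""
    weights = [rng.gauss(0.0, 0.01) for _ in range(num_weights)]
    # Remaining layers get bias 0 (assumption taken from the original paper).
    bias_value = 1.0 if name in BIAS_ONE_LAYERS else 0.0
    biases = [bias_value] * num_biases
    return weights, biases


rng = random.Random(0)
# conv1 has 11*11*3 weights per filter and 96 filters.
weights, biases = init_layer("conv1", 11 * 11 * 3 * 96, 96, rng)
print(len(weights), biases[0])
```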

History

Previous work

(Note on input size: the AlexNet input should be 227×227×3 rather than 224×224×3 for the layer arithmetic to work out. The original paper states 224×224×3, but Andrej Karpathy, the former head of computer vision at Tesla, has said it should be 227×227×3 and that Krizhevsky did not explain the 224×224×3 figure. With a 227×227 input, the first convolution (11×11 kernels, stride 4) produces a 55×55×96 output instead of 54×54×96, since (input width 227 − kernel width 11) / stride 4 + 1 = 216 / 4 + 1 = 55. Because the input and the kernel are both square, the output height equals its width, giving a 55×55 feature map.)
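The arithmetic in the note above can be checked with a small helper. This is a sketch with illustrative function names. The trace through the later layers assumes 3×3 max-pooling with stride 2 and paddings of 2 and 1 on the 5×5 and 3×3 convolutions; these values are taken from the original paper and common reimplementations, not from this article.

```python
def conv_output_size(input_size, kernel, stride, padding=0):
    """Output width of a convolution or pooling window (floor division)."""
    return (input_size + 2 * padding - kernel) // stride + 1


def fits_exactly(input_size, kernel, stride, padding=0):
    """True when the windows tile the padded input with no leftover pixels."""
    return (input_size + 2 * padding - kernel) % stride == 0


# First convolution: 11x11 kernels, stride 4.
for size in (227, 224):
    out = conv_output_size(size, 11, 4)
    print(size, "->", out, "exact" if fits_exactly(size, 11, 4) else "leftover pixels")


def trace_spatial_sizes(input_size=227):
    """Widths after each stage, under the pooling and padding assumptions above."""
    sizes = []
    x = conv_output_size(input_size, 11, 4)
    sizes.append(x)                       # conv1
    x = conv_output_size(x, 3, 2)
    sizes.append(x)                       # pool1
    x = conv_output_size(x, 5, 1, padding=2)
    sizes.append(x)                       # conv2
    x = conv_output_size(x, 3, 2)
    sizes.append(x)                       # pool2
    for _ in range(3):                    # conv3, conv4, conv5
        x = conv_output_size(x, 3, 1, padding=1)
    sizes.append(x)
    x = conv_output_size(x, 3, 2)
    sizes.append(x)                       # pool3
    return sizes


print(trace_spatial_sizes())
```

With a 227-pixel input the first convolution fits exactly and yields 55, while a 224-pixel input leaves pixels over and floors to 54. Under the stated assumptions the trace ends at a 6×6 map, which with 256 channels gives the 9,216 inputs of the first fully connected layer.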

AlexNet is a convolutional neural network. In 1980, Kunihiko Fukushima proposed an early CNN named the neocognitron.[5][6] It was trained by an unsupervised learning algorithm. LeNet (Yann LeCun et al., 1989),[7][8] which culminated in LeNet-5 (1998), was trained by supervised learning with the backpropagation algorithm, and its architecture is essentially the same as AlexNet's on a small scale.

Max pooling was used in 1990 for speech processing (essentially a 1-dimensional CNN),[9] and for image processing, was first used in the Cresceptron of 1992.[10]

During the 2000s, as GPU hardware improved, some researchers adapted GPUs for general-purpose computing, including neural network training. K. Chellapilla et al. (2006) trained a CNN on a GPU 4 times faster than an equivalent CPU implementation.[11] Raina et al. (2009) trained a deep belief network with 100 million parameters on an Nvidia GeForce GTX 280 with up to a 70-fold speedup over CPUs.[12] A deep CNN by Dan Cireșan et al. (2011) at IDSIA was 60 times faster than an equivalent CPU implementation.[13] Between May 15, 2011, and September 10, 2012, their CNN won four image competitions and achieved state-of-the-art results on multiple image databases.[14][15][16] According to the AlexNet paper,[1] Cireșan's earlier net is "somewhat similar." Both were written in CUDA to run on GPUs.

Computer vision

During the 1990–2010 period, neural networks did not outperform other machine learning methods such as kernel regression, support vector machines, AdaBoost, and structured estimation.[17] For computer vision in particular, much progress came from manual feature engineering, such as SIFT features, SURF features, HoG features, and bags of visual words. The view that features could be learned directly from data was a minority position in computer vision, and it became dominant only after AlexNet.[18]

In 2011, Geoffrey Hinton began asking colleagues, "What do I have to do to convince you that neural networks are the future?" Jitendra Malik, a sceptic of neural networks, recommended the PASCAL Visual Object Classes challenge. Hinton said its dataset was too small, so Malik recommended the ImageNet challenge instead.[19]

While AlexNet and LeNet share essentially the same design and algorithm, AlexNet is much larger than LeNet and was trained on a much larger dataset on much faster hardware. Over the period of 20 years, both data and compute became cheaply available.[18]

Subsequent work

AlexNet has been highly influential, leading to much subsequent work on using CNNs for computer vision and on using GPUs to accelerate deep learning. As of early 2025, the AlexNet paper has been cited over 172,000 times according to Google Scholar.[20]

At the time of publication, there was no framework available for GPU-based neural network training and inference. The codebase for AlexNet was released under a BSD license and was commonly used in neural network research for several years afterwards.[21][18]

In one direction, subsequent work aimed to train increasingly deep CNNs that achieved increasingly high performance on ImageNet. This line of research includes GoogLeNet (2014), VGGNet (2014), Highway network (2015), and ResNet (2015). Another direction aimed to reproduce the performance of AlexNet at a lower cost. This line of research includes SqueezeNet (2016), MobileNet (2017), and EfficientNet (2019).

Geoffrey Hinton, Ilya Sutskever, and Alex Krizhevsky formed DNNResearch soon afterwards and sold the company, and the AlexNet source code along with it, to Google. The source code for AlexNet has since been released under the BSD-2 license via the Computer History Museum.[22]
