Computer-generated imagery

Application of computer graphics to create or contribute to images

From Wikipedia, the free encyclopedia

Computer-generated imagery (CGI) is a specific technology or application of computer graphics for creating or improving images in art, printed media, simulators, videos and video games. These images are either static (i.e. still images) or dynamic (i.e. moving images). CGI refers both to 2D computer graphics and (more frequently) to 3D computer graphics used to design characters, virtual worlds, or scenes and special effects (in films, television programs, commercials, etc.). The application of CGI for creating or improving animations is called computer animation, or CGI animation.

History

The first feature film to use CGI, and to composite live-action film with CGI, was Vertigo (1958),[1] which used abstract computer graphics by John Whitney in its opening credits. The first feature film to make use of CGI with live action in the storyline of the film was the 1973 film Westworld.[2] Other early films that incorporated CGI include Star Wars: Episode IV (1977),[2] Tron (1982), Star Trek II: The Wrath of Khan (1982),[2] Golgo 13: The Professional (1983),[3] The Last Starfighter (1984),[4] Young Sherlock Holmes (1985), The Abyss (1989), Terminator 2: Judgment Day (1991), Jurassic Park (1993) and Toy Story (1995). The first music video to use CGI was Will Powers' Adventures in Success (1983).[5]

Before CGI became prevalent in film, virtual reality, personal computing and gaming, one of its early practical applications was in aviation and military training, namely the flight simulator. Visual systems developed for flight simulators were also an important precursor to today's three-dimensional computer graphics and CGI systems, because the object of flight simulation was to reproduce on the ground the behavior of an aircraft in flight. Much of this reproduction had to do with believable visual synthesis that mimicked reality.[6] The Link Digital Image Generator (DIG) by the Singer Company (Singer-Link) was considered one of the world's first-generation CGI systems.[7] It was a real-time, 3D-capable, day/dusk/night system that was used for NASA shuttles, F-111s, the Black Hawk and the B-52. Link's Digital Image Generator had an architecture designed to provide a visual system that realistically corresponded with the view of the pilot.[8] The basic architecture of the DIG and its subsequent improvements comprised a scene manager followed by a geometric processor, a video processor and finally the display, with the end goal of a visual system that rendered realistic texture, shading and translucency and was free of aliasing.[9]

Because of the need to pair virtual synthesis with military-level training requirements, CGI technologies applied in flight simulation were often years ahead of what was available in commercial computing or even in high-budget film. Early CGI systems could depict only objects consisting of planar polygons. Advances in algorithms and electronics in flight simulator visual systems and CGI in the 1970s and 1980s influenced many technologies still used in modern CGI, adding the ability to superimpose texture over surfaces and to transition imagery smoothly from one level of detail to the next.[10]

The evolution of CGI led to the emergence of virtual cinematography in the 1990s, where the vision of the simulated camera is not constrained by the laws of physics. Availability of CGI software and increased computer speeds have allowed individual artists and small companies to produce professional-grade films, games, and fine art from their home computers.

Static images and landscapes

Animated images are not the only form of computer-generated imagery; natural-looking landscapes (such as fractal landscapes) are also generated via computer algorithms. A simple way to generate fractal surfaces is to use an extension of the triangular mesh method, relying on the construction of some special case of a de Rham curve, e.g., midpoint displacement.[11] For instance, the algorithm may start with a large triangle, then recursively zoom in by dividing it into four smaller Sierpinski triangles, then interpolate the height of each point from its nearest neighbors.[11] The creation of a Brownian surface may be achieved not only by adding noise as new nodes are created but also by adding additional noise at multiple levels of the mesh.[11] Thus a topographical map with varying levels of height can be created using relatively straightforward fractal algorithms. Some typical, easy-to-program fractals used in CGI are the plasma fractal and the more dramatic fault fractal.[12]
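The midpoint displacement idea can be illustrated with a minimal sketch. It is deliberately reduced to one dimension (a height profile rather than a triangular mesh), and the function name `midpoint_displacement` and the `roughness` parameter are assumptions made for this illustration rather than details from the cited sources.

```python
import random

def midpoint_displacement(left, right, levels, roughness=0.5, seed=0):
    """Return a 1D height profile built by repeated midpoint displacement.

    left, right: heights at the two endpoints.
    levels: number of subdivision passes; the result has 2**levels + 1 points.
    roughness: factor by which the random offset shrinks on each pass
               (an illustrative parameter, not taken from the article).
    """
    rng = random.Random(seed)
    heights = [left, right]
    amplitude = 1.0
    for _ in range(levels):
        refined = []
        for a, b in zip(heights, heights[1:]):
            # Interpolate the midpoint from its two neighbours, then add noise.
            mid = (a + b) / 2 + rng.uniform(-amplitude, amplitude)
            refined.extend([a, mid])
        refined.append(heights[-1])
        heights = refined
        amplitude *= roughness  # smaller noise at finer scales
    return heights

profile = midpoint_displacement(0.0, 0.0, levels=4)
print(len(profile))  # 17 points: 2**4 + 1
```

Each pass doubles the resolution while adding progressively smaller noise, which is what gives the profile detail at several scales. A two-dimensional version would apply the same interpolate-then-perturb step to the nodes of a mesh.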

Many specific techniques have been researched and developed to produce highly focused computer-generated effects. One example is the use of specific models to represent the chemical weathering of stones in order to model erosion and produce an "aged appearance" for a given stone-based surface.[13]

Architectural scenes

Modern architects use services from computer graphics firms to create three-dimensional models for both customers and builders. These computer-generated models can be more accurate than traditional drawings. Architectural animation (which provides animated movies of buildings, rather than interactive images) can also be used to show how a building will relate to its environment and surrounding buildings. The processing of architectural spaces without the use of paper and pencil tools is now a widely accepted practice with a number of computer-assisted architectural design systems.[14]

Architectural modeling tools allow an architect to visualize a space and perform "walk-throughs" in an interactive manner, thus providing "interactive environments" at both the urban and building levels.[15] Specific applications in architecture include not only the specification of building structures (such as walls and windows) and walk-throughs but also the effects of light and how sunlight will affect a specific design at different times of the day.[16][17]

Architectural modeling tools have now become increasingly internet-based. However, the quality of internet-based systems still lags behind sophisticated in-house modeling systems.[18]

In some applications, computer-generated images are used to "reverse engineer" historical buildings. For instance, a computer-generated reconstruction of the monastery at Georgenthal in Germany was derived from the ruins of the monastery, yet provides the viewer with a "look and feel" of what the building would have looked like in its day.[19]

Anatomical models

Computer-generated models used in skeletal animation are not always anatomically correct. However, organizations such as the Scientific Computing and Imaging Institute have developed anatomically correct computer-based models. Computer-generated anatomical models can be used for both instructional and operational purposes. To date, a large body of artist-produced medical images continues to be used by medical students, such as images by Frank H. Netter, e.g. cardiac images. However, a number of online anatomical models are becoming available.

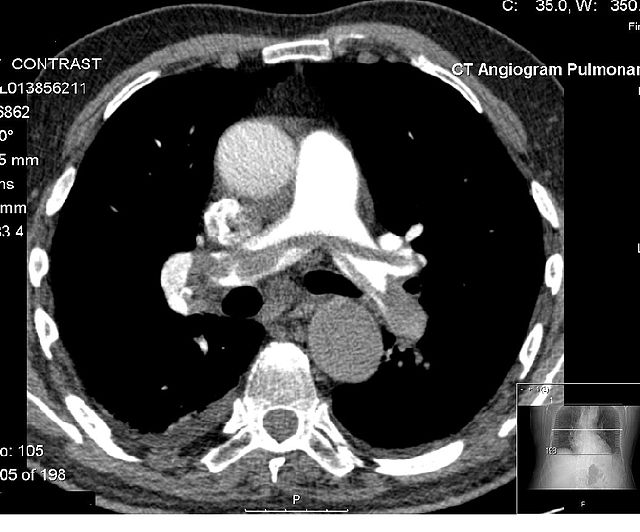

A single patient X-ray is not a computer-generated image, even if digitized. However, in applications which involve CT scans, a three-dimensional model is automatically produced from many single-slice X-rays, producing a "computer-generated image". Applications involving magnetic resonance imaging also bring together a number of "snapshots" (in this case via magnetic pulses) to produce a composite, internal image.
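The idea of assembling a volume from many single slices can be illustrated with a toy sketch. It assumes the slices are already available as small 2D grids of intensity values. The function names `stack_slices` and `reslice_along_y` are hypothetical, and real CT software performs tomographic reconstruction and far more processing than is shown here.

```python
def stack_slices(slices):
    """Stack equally sized 2D slices into a volume indexed as volume[z][y][x]."""
    rows, cols = len(slices[0]), len(slices[0][0])
    for s in slices:
        if len(s) != rows or any(len(r) != cols for r in s):
            raise ValueError("all slices must have the same shape")
    # Copy the data so that later edits to the volume leave the input untouched.
    return [[list(row) for row in s] for s in slices]

def reslice_along_y(volume, y):
    """Return the plane at a fixed y: a 2D image indexed as image[z][x]."""
    return [list(plane[y]) for plane in volume]

# Three toy slices (z = 0, 1, 2), each with 2 rows and 3 columns.
slices = [
    [[0, 0, 0], [0, 1, 0]],
    [[0, 2, 0], [0, 3, 0]],
    [[0, 0, 0], [0, 4, 0]],
]
volume = stack_slices(slices)
side_view = reslice_along_y(volume, 1)
print(side_view)
```

The `side_view` image cuts across all three slices, so it is a view that none of the individual slices contains on its own. In that limited sense the stacked result is computed rather than directly captured.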

In modern medical applications, patient-specific models are constructed in 'computer assisted surgery'. For instance, in total knee replacement, the construction of a detailed patient-specific model can be used to carefully plan the surgery.[20] These three-dimensional models are usually extracted from multiple CT scans of the appropriate parts of the patient's own anatomy. Such models can also be used for planning aortic valve implantations, one of the common procedures for treating heart disease. Given that the shape, diameter, and position of the coronary openings can vary greatly from patient to patient, the extraction (from CT scans) of a model that closely resembles a patient's valve anatomy can be highly beneficial in planning the procedure.[21]

Cloth and skin images

Models of cloth generally fall into three groups:

- The geometric-mechanical structure at yarn crossing

- The mechanics of continuous elastic sheets

- The geometric macroscopic features of cloth.[22]

To date, making the clothing of a digital character automatically fold in a natural way remains a challenge for many animators.[23]

In addition to their use in film, advertising and other modes of public display, computer generated images of clothing are now routinely used by top fashion design firms.[24]

The challenge in rendering human skin images involves three levels of realism:

- Photo realism in resembling real skin at the static level

- Physical realism in resembling its movements

- Function realism in resembling its response to actions.[25]

The finest visible features, such as fine wrinkles and skin pores, are about 100 μm (0.1 millimetres) in size. Skin can be modeled as a 7-dimensional bidirectional texture function (BTF) or a collection of bidirectional scattering distribution functions (BSDFs) over the target's surfaces.

Interactive simulation and visualization

Interactive visualization is the rendering of data that may vary dynamically while allowing a user to view the data from multiple perspectives. The application areas may vary significantly, ranging from the visualization of flow patterns in fluid dynamics to specific computer-aided design applications.[26] The data rendered may correspond to specific visual scenes that change as the user interacts with the system; for example, simulators such as flight simulators make extensive use of CGI techniques for representing the world.[27]

At the abstract level, an interactive visualization process involves a "data pipeline" in which the raw data is managed and filtered to a form that makes it suitable for rendering. This is often called the "visualization data". The visualization data is then mapped to a "visualization representation" that can be fed to a rendering system. This is usually called a "renderable representation". This representation is then rendered as a displayable image.[27] As the user interacts with the system (e.g. by using joystick controls to change their position within the virtual world) the raw data is fed through the pipeline to create a new rendered image, often making real-time computational efficiency a key consideration in such applications.[27][28]
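The stages of such a pipeline can be sketched in a few lines. This is only an illustration under simplifying assumptions: the "image" is a single row of text, and every name used (`filter_raw`, `map_to_renderable`, `render`, `frame`, and the `altitude` field) is hypothetical rather than taken from any particular visualization system.

```python
def filter_raw(raw_samples, max_altitude):
    """Raw data -> visualization data: keep only samples worth drawing."""
    return [s for s in raw_samples if s["altitude"] <= max_altitude]

def map_to_renderable(vis_data, viewer_x):
    """Visualization data -> renderable representation (viewer-relative glyphs)."""
    return [{"x": s["x"] - viewer_x, "glyph": "^"} for s in vis_data]

def render(renderable, width=11):
    """Renderable representation -> displayable image (here, one text row)."""
    row = [" "] * width
    centre = width // 2
    for item in renderable:
        col = centre + item["x"]
        if 0 <= col < width:
            row[col] = item["glyph"]
    return "".join(row)

def frame(raw_samples, viewer_x):
    """Run the whole pipeline once for the viewer's current position."""
    vis_data = filter_raw(raw_samples, max_altitude=1000)
    return render(map_to_renderable(vis_data, viewer_x))

raw = [
    {"x": 2, "altitude": 500},
    {"x": -3, "altitude": 200},
    {"x": 0, "altitude": 5000},  # filtered out: above max_altitude
]
print(repr(frame(raw, viewer_x=0)))
print(repr(frame(raw, viewer_x=1)))  # the viewer moved, so the image shifts
```

Calling `frame` again after each user input mirrors the loop described above: the same raw data passes through every stage to produce a new image, which is why the cost of each stage matters in real-time use.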

Computer animation

While computer-generated images of landscapes may be static, computer animation only applies to dynamic images that resemble a movie. However, in general, the term computer animation refers to dynamic images that do not allow user interaction, and the term virtual world is used for the interactive animated environments.

Computer animation is essentially a digital successor to the art of stop motion animation of 3D models and frame-by-frame animation of 2D illustrations. Computer-generated animations are more controllable than other, more physically based processes, such as constructing miniatures for effects shots or hiring extras for crowd scenes, and they allow the creation of images that would not be feasible using any other technology. They can also allow a single graphic artist to produce such content without the use of actors, expensive set pieces, or props.

To create the illusion of movement, an image is displayed on the computer screen and repeatedly replaced by a new image which is similar to the previous image, but advanced slightly in the time domain (usually at a rate of 24 or 30 frames/second). This technique is identical to how the illusion of movement is achieved with television and motion pictures.
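As a rough sketch of this frame-by-frame idea, the following computes where an object would be drawn in each successive frame of a simple linear move. The function name `animate` and its parameters are assumptions made for this illustration, and a real animation system would also draw and display each frame.

```python
def animate(start, end, duration_s, fps=24):
    """Return the position to draw in each frame of a linear move.

    Each frame differs only slightly from the previous one, advanced by
    1/fps seconds in time.
    """
    total = round(duration_s * fps)  # number of frame-to-frame steps
    if total < 1:
        raise ValueError("duration must cover at least one frame step")
    return [start + (end - start) * i / total for i in range(total + 1)]

frames = animate(0.0, 48.0, duration_s=2.0, fps=24)
print(len(frames))            # 49 positions: 48 steps plus the starting frame
print(frames[1] - frames[0])  # 1.0 unit of movement per frame
```

At 24 frames per second each image stays on screen for about 42 milliseconds (1000 / 24), and at 30 frames per second for about 33 milliseconds.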

Text-to-image models

An astronaut riding a horse, by Hiroshige, generated by Stable Diffusion 3.5, a large-scale text-to-image model first released in 2022

A text-to-image model is a machine learning model which takes an input natural language description and produces an image matching that description.

Text-to-image models began to be developed in the mid-2010s during the beginnings of the AI boom, as a result of advances in deep neural networks. In 2022, the output of state-of-the-art text-to-image models—such as OpenAI's DALL-E 2, Google Brain's Imagen, Stability AI's Stable Diffusion, and Midjourney—began to be considered to approach the quality of real photographs and human-drawn art.

Text-to-image models are generally latent diffusion models, which combine a language model, which transforms the input text into a latent representation, and a generative image model, which produces an image conditioned on that representation. The most effective models have generally been trained on massive amounts of image and text data scraped from the web.[29]

Virtual worlds

A virtual world is an agent-based and simulated environment allowing users to interact with artificially animated characters (e.g. software agents) or with other physical users, through the use of avatars. Virtual worlds are intended for their users to inhabit and interact, and the term today has become largely synonymous with interactive 3D virtual environments, where the users take the form of avatars visible to others graphically.[30] These avatars are usually depicted as textual, two-dimensional, or three-dimensional graphical representations, although other forms are possible[31] (auditory[32] and touch sensations for example). Some, but not all, virtual worlds allow for multiple users.

In courtrooms

Computer-generated imagery has been used in courtrooms, primarily since the early 2000s, to help judges or juries better visualize a sequence of events, evidence or a hypothesis.[33] However, some experts have argued that it is prejudicial, and a 1997 study showed that people are poor intuitive physicists and are easily influenced by computer-generated images.[34] Thus it is important that jurors and other legal decision-makers be made aware that such exhibits are merely a representation of one potential sequence of events.

Broadcast and live events

Weather visualizations were the first application of CGI in television. One of the first companies to offer computer systems for generating weather graphics was ColorGraphics Weather Systems in 1979 with the "LiveLine", based around an Apple II computer, with later models from ColorGraphics using Cromemco computers fitted with their Dazzler video graphics card.

It has now become common in weather casting to display full motion video of images captured in real-time from multiple cameras and other imaging devices. Coupled with 3D graphics symbols and mapped to a common virtual geospatial model, these animated visualizations constitute the first true application of CGI to TV.

CGI has become common in sports telecasting. Sports and entertainment venues are provided with see-through and overlay content through tracked camera feeds for enhanced viewing by the audience. Examples include the yellow "first down" line seen in television broadcasts of American football games showing the line the offensive team must cross to receive a first down. CGI is also used in association with football and other sporting events to show commercial advertisements overlaid onto the view of the playing area. Sections of rugby fields and cricket pitches also display sponsored images. Swimming telecasts often add a line across the lanes to indicate the position of the current record holder as a race proceeds to allow viewers to compare the current race to the best performance. Other examples include hockey puck tracking and annotations of racing car performance[35] and snooker ball trajectories.[36][37] Sometimes CGI on TV with correct alignment to the real world has been referred to as augmented reality.

Motion capture

Computer-generated imagery is often used in conjunction with motion capture to better cover the faults that come with CGI and animation. Computer-generated imagery is limited in its practical application by how realistic it can look. Unrealistic or badly managed computer-generated imagery can result in the uncanny valley effect.[38] This effect refers to the human ability to recognize things that look eerily like humans but are slightly off. It exposes a weakness of conventional computer-generated imagery, which, due to the complex anatomy of the human body, can often fail to replicate it perfectly. Artists can use motion capture to get footage of a human performing an action and then replicate it closely with computer-generated imagery so that it looks normal.

The lack of anatomically correct digital models contributes to the necessity of motion capture as it is used with computer-generated imagery. Because computer-generated imagery reflects only the outside, or skin, of the object being rendered, it fails to capture the infinitesimally small interactions between interlocking muscle groups used in fine motor skills like speaking. The constant motion of the face as it makes sounds with shaped lips and tongue movement, along with the facial expressions that go along with speaking are difficult to replicate by hand.[39] Motion capture can catch the underlying movement of facial muscles and better replicate the visual that goes along with the audio.

See also

- 3D modeling

- Cinema Research Corporation

- Cel shading

- Anime Studio

- Animation database

- List of computer-animated films

- Digital image

- Parallel rendering

- Photoshop is the industry standard commercial digital photo editing tool.

- GIMP, a FOSS digital photo editing application.

- Poser, DIY CGI optimized for soft models

- Random number generation

- Ray tracing (graphics)

- Real-time computer graphics

- Shader

- Virtual human

- Virtual studio

- Virtual Physiological Human
