Zipf's law

Probability distribution From Wikipedia, the free encyclopedia

Zipf's law (/zɪf/; German pronunciation: [tsɪpf]) is an empirical law stating that when a list of measured values is sorted in decreasing order, the value of the n-th entry is often approximately inversely proportional to n.

The best known instance of Zipf's law applies to the frequency table of words in a text or corpus of natural language:

$$\text{word frequency} \propto \frac{1}{\text{word rank}}$$

It is usually found that the most common word occurs approximately twice as often as the next most common one, three times as often as the third most common, and so on. For example, in the Brown Corpus of American English text, the word "the" is the most frequently occurring word, and by itself accounts for nearly 7% of all word occurrences (69,971 out of slightly over 1 million). True to Zipf's law, the second-place word "of" accounts for slightly over 3.5% of words (36,411 occurrences), followed by "and" (28,852).[2] The law is often used in the following form, called the Zipf–Mandelbrot law:

$$\text{frequency} \propto \frac{1}{(\text{rank} + b)^a}$$

where $a$ and $b$ are fitted parameters, with $a \approx 1$ and $b \approx 2.7$.[1]
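The Brown Corpus counts quoted above can be checked against the idealized law with a few lines of arithmetic. This sketch only uses the three counts given in the text; under Zipf's law with exponent 1, the ratio f(1)/f(r) should be close to r.

```python
# Word counts reported for the Brown Corpus in the text above.
counts = {"the": 69_971, "of": 36_411, "and": 28_852}

top = counts["the"]
for rank, (word, count) in enumerate(counts.items(), start=1):
    # Ideal Zipf (exponent 1): the top word is r times as frequent as the r-th word.
    print(f"rank {rank}: {word!r}  f(1)/f({rank}) = {top / count:.2f}  (Zipf predicts {rank})")
```

The observed ratios are roughly 1.92 and 2.43 against the ideal 2 and 3, which is the kind of approximate agreement the law describes.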

This law is named after the American linguist George Kingsley Zipf,[3][4][5] and is still an important concept in quantitative linguistics. It has been found to apply to many other types of data studied in the physical and social sciences.

In mathematical statistics, the concept has been formalized as the Zipfian distribution: a family of related discrete probability distributions whose rank-frequency distribution is an inverse power law relation. They are related to Benford's law and the Pareto distribution.

Some sets of time-dependent empirical data deviate somewhat from Zipf's law. Such empirical distributions are said to be quasi-Zipfian.

History


In 1913, the German physicist Felix Auerbach observed an inverse proportionality between the population sizes of cities and their ranks when sorted by decreasing order of that variable.[6]

Zipf's law had been discovered before Zipf,[a] first by the French stenographer Jean-Baptiste Estoup in 1916,[8][7] and also by G. Dewey in 1923,[9] and by E. Condon in 1928.[10]

The same relation for frequencies of words in natural language texts was observed by George Zipf in 1932,[4] but he never claimed to have originated it. In fact, Zipf did not like mathematics. In his 1932 publication,[11] he speaks with disdain about the involvement of mathematics in linguistics; for example, on p. 21:

- ... let me say here for the sake of any mathematician who may plan to formulate the ensuing data more exactly, the ability of the highly intense positive to become the highly intense negative, in my opinion, introduces the devil into the formula in the form of

The only mathematical expression Zipf used looks like $ab^2 = \text{constant}$, which he "borrowed" from Alfred J. Lotka's 1926 publication.[12]

The same relationship was found to occur in many other contexts, and for other variables besides frequency.[1] For example, when corporations are ranked by decreasing size, their sizes are found to be inversely proportional to the rank.[13] The same relation is found for personal incomes (where it is called the Pareto principle[14]), the number of people watching the same TV channel,[15] notes in music,[16] cell transcriptomes,[17][18] and more.

In 1992, bioinformatician Wentian Li published a short paper[19] showing that Zipf's law emerges even in randomly generated texts. It included a proof that the power-law form of Zipf's law was a byproduct of ordering words by rank.

Formal definition


Probability mass function: plot of the Zipf PMF for N = 10 on a log–log scale. The horizontal axis is the index k. (The function is only defined at integer values of k; the connecting lines are only visual guides and do not indicate continuity.)

Cumulative distribution function: plot of the Zipf CDF for N = 10. The horizontal axis is the index k. (The function is only defined at integer values of k; the connecting lines do not indicate continuity.)

| Property | Value |
|---|---|
| Parameters | $s \geq 0$ (real); $N \in \{1, 2, 3, \ldots\}$ (integer) |
| Support | $k \in \{1, 2, \ldots, N\}$ |
| PMF | $\frac{1}{H_{N,s}}\,\frac{1}{k^s}$, where $H_{N,s}$ is the Nth generalized harmonic number |
| CDF | $\frac{H_{k,s}}{H_{N,s}}$ |
| Mean | $\frac{H_{N,s-1}}{H_{N,s}}$ |
| Mode | $1$ |
| Variance | $\frac{H_{N,s-2}}{H_{N,s}} - \frac{H_{N,s-1}^2}{H_{N,s}^2}$ |
| Entropy | $\frac{s}{H_{N,s}} \sum_{k=1}^{N} \frac{\ln k}{k^s} + \ln H_{N,s}$ |
| MGF | $\frac{1}{H_{N,s}} \sum_{n=1}^{N} \frac{e^{nt}}{n^s}$ |
| CF | $\frac{1}{H_{N,s}} \sum_{n=1}^{N} \frac{e^{int}}{n^s}$ |

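Formally, the Zipf distribution on N elements assigns to the element of rank k (counting from 1) the probability:

$$f(k; N) = \begin{cases} \dfrac{1}{H_N}\,\dfrac{1}{k}, & \text{if } 1 \le k \le N, \\ 0, & \text{if } k < 1 \text{ or } N < k, \end{cases}$$

where $H_N$ is a normalization constant, the Nth harmonic number:

$$H_N \equiv \sum_{k=1}^{N} \frac{1}{k}.$$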

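The distribution is sometimes generalized to an inverse power law with exponent s instead of 1.[20] Namely,

$$f(k; N, s) = \frac{1}{H_{N,s}}\,\frac{1}{k^s},$$

where $H_{N,s}$ is a generalized harmonic number:

$$H_{N,s} \equiv \sum_{k=1}^{N} \frac{1}{k^s}.$$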

The generalized Zipf distribution can be extended to infinitely many items (N = ∞) only if the exponent s exceeds 1. In that case, the normalization constant $H_{N,s}$ becomes Riemann's zeta function:

$$H_{\infty,s} = \zeta(s) = \sum_{k=1}^{\infty} \frac{1}{k^s} < \infty.$$

The infinite item case is characterized by the Zeta distribution and is called Lotka's law. If the exponent s is 1 or less, the normalization constant $H_{N,s}$ diverges as N tends to infinity.
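The definitions above translate directly into code. This is a minimal sketch; the function names `generalized_harmonic` and `zipf_pmf` are our own, not from any library. The loop illustrates the convergence claim: for s = 1, $H_{N,1} - \ln N$ settles near the Euler–Mascheroni constant (about 0.5772), so $H_{N,1}$ grows without bound like $\ln N$, while for s = 2 the sum approaches $\zeta(2) = \pi^2/6 \approx 1.6449$.

```python
import math

def generalized_harmonic(n_items, s):
    """H_{N,s}: the sum of k^(-s) for k = 1..N."""
    return sum(k ** -s for k in range(1, n_items + 1))

def zipf_pmf(k, n_items, s=1.0):
    """Probability of rank k under the Zipf distribution on N items with exponent s."""
    if not 1 <= k <= n_items:
        return 0.0  # outside the support {1, ..., N}
    return k ** -s / generalized_harmonic(n_items, s)

for n in (10**2, 10**4, 10**5):
    # Column 2 tends to a constant (H_{N,1} diverges like ln N); column 3 tends to pi^2/6.
    print(n, generalized_harmonic(n, 1.0) - math.log(n), generalized_harmonic(n, 2.0))
```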

Empirical testing

Empirically, a data set can be tested to see whether Zipf's law applies by checking the goodness of fit of an empirical distribution to the hypothesized power law distribution with a Kolmogorov–Smirnov test, and then comparing the (log) likelihood ratio of the power law distribution to alternative distributions like an exponential distribution or lognormal distribution.[21]
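A simplified version of the fitting step can be sketched as follows. It assumes the ranks are already known and the support is a fixed set {1, ..., N}, fits the exponent s of a finite Zipf law by maximum likelihood, and reports the Kolmogorov–Smirnov distance between the empirical and fitted CDFs. It does not implement the full procedure of the cited method (for example a lower cutoff, a p-value, or the likelihood-ratio comparison with lognormal or exponential alternatives). The search interval [0, 5], the helper names, and the toy counts are our own assumptions.

```python
import math

def generalized_harmonic(n_items, s):
    """H_{N,s} = sum of k^(-s) for k = 1..N."""
    return sum(k ** -s for k in range(1, n_items + 1))

def log_likelihood(s, counts):
    """Log-likelihood of observed ranks under a finite Zipf law with exponent s.

    counts[k - 1] is how many observations have rank k (N = len(counts)).
    """
    n_obs = sum(counts)
    sum_log_rank = sum(c * math.log(k) for k, c in enumerate(counts, start=1))
    return -s * sum_log_rank - n_obs * math.log(generalized_harmonic(len(counts), s))

def fit_exponent(counts, lo=0.0, hi=5.0, iters=100):
    """Maximum-likelihood s by ternary search; the log-likelihood is concave in s."""
    for _ in range(iters):
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        if log_likelihood(m1, counts) < log_likelihood(m2, counts):
            lo = m1  # the maximum lies to the right of m1
        else:
            hi = m2  # the maximum lies to the left of m2
    return (lo + hi) / 2

def ks_statistic(counts, s):
    """Largest absolute gap between the empirical CDF and the fitted Zipf CDF."""
    n_obs = sum(counts)
    h = generalized_harmonic(len(counts), s)
    emp_cdf = model_cdf = gap = 0.0
    for k, c in enumerate(counts, start=1):
        emp_cdf += c / n_obs
        model_cdf += k ** -s / h
        gap = max(gap, abs(emp_cdf - model_cdf))
    return gap

# Hypothetical toy data: counts exactly proportional to 1/k for ranks 1..10.
counts = [2520 // k for k in range(1, 11)]
s_hat = fit_exponent(counts)
print(s_hat, ks_statistic(counts, s_hat))
```

On these toy counts the estimate is essentially 1 and the KS distance essentially 0. Concavity holds because $\ln H_{N,s}$ is a log-sum-exp of functions linear in s, hence convex, which is what makes the simple ternary search valid.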

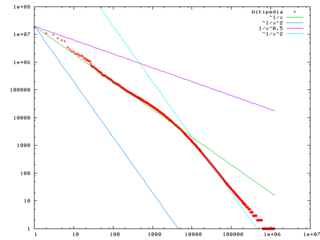

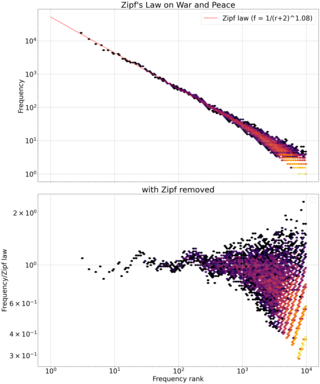

Zipf's law can be visualized by plotting the item frequency data on a log-log graph, with the axes being the logarithm of rank order and the logarithm of frequency. The data conform to Zipf's law with exponent s to the extent that the plot approximates a linear (more precisely, affine) function with slope −s. For exponent s = 1, one can also plot the reciprocal of the frequency (mean interword interval) against rank, or the reciprocal of rank against frequency, and compare the result with the line through the origin with slope 1.[3]
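The slope of the log-log plot can be estimated with ordinary least squares, as in the sketch below (the function name is ours). This is a quick visual check rather than a rigorous estimator: least-squares fits on log-log axes are widely regarded as less reliable than likelihood-based fits such as the one used with the Kolmogorov–Smirnov approach.

```python
import math

def loglog_slope(frequencies):
    """Ordinary least-squares slope of log(frequency) against log(rank).

    `frequencies` must already be sorted in decreasing order; ranks start at 1.
    """
    xs = [math.log(rank) for rank in range(1, len(frequencies) + 1)]
    ys = [math.log(f) for f in frequencies]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var

# Synthetic frequencies that follow k^(-1.5) exactly; -slope estimates s.
freqs = [1000 * k ** -1.5 for k in range(1, 51)]
print(-loglog_slope(freqs))
```

On exact power-law data the estimate recovers s = 1.5 up to floating-point rounding; on real data the curvature at both ends of the plot pulls the fitted slope around.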

Statistical explanations


Although Zipf's law holds for most natural languages, and even for certain artificial ones such as Esperanto[22] and Toki Pona,[23] the reason is still not well understood.[24] Recent reviews of generative processes for Zipf's law include Mitzenmacher, "A Brief History of Generative Models for Power Law and Lognormal Distributions",[25] and Simkin, "Re-inventing Willis".[26]

However, it may be partly explained by statistical analysis of randomly generated texts. Wentian Li has shown that in a document in which each character has been chosen randomly from a uniform distribution of all letters (plus a space character), the "words" with different lengths follow the macro-trend of Zipf's law (shorter words are more probable, and all words of the same length are equally probable).[19] In 1959, Vitold Belevitch observed that if any of a large class of well-behaved statistical distributions (not only the normal distribution) is expressed in terms of rank and expanded into a Taylor series, the first-order truncation of the series results in Zipf's law. Further, a second-order truncation of the Taylor series results in Mandelbrot's law.[27][28]
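A back-of-the-envelope version of the random-text argument shows why the exponent comes out close to 1. It assumes M equally likely letters plus one space key, each struck with probability $1/(M+1)$, and ignores empty "words" between consecutive spaces. A given word of length L then has frequency proportional to $(M+1)^{-L}$, and since there are $M + M^2 + \cdots + M^{L-1}$ shorter words, words of length L occupy ranks of order $M^L$:

$$
f \propto (M+1)^{-L}, \qquad r \sim M^{L}
\;\Longrightarrow\; L \approx \frac{\ln r}{\ln M}
\;\Longrightarrow\; f(r) \propto r^{-\ln(M+1)/\ln M}.
$$

For M = 26 the exponent is $\ln 27 / \ln 26 \approx 1.01$. Within each length all words tie, so the exact curve is a staircase that follows this power law only on average.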

The principle of least effort is another possible explanation: Zipf himself proposed that neither speakers nor hearers using a given language want to work any harder than necessary to reach understanding, and that the process that results in approximately equal distribution of effort leads to the observed Zipf distribution.[5][29]

A minimal explanation assumes that words are generated by monkeys typing randomly. If language is generated by a single monkey typing randomly, with fixed and nonzero probability of hitting each letter key or white space, then the words (letter strings separated by white spaces) produced by the monkey follow Zipf's law.[30]
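The random-typing model is easy to simulate. The sketch below assumes a two-letter keyboard ("ab") plus a space bar, all equally likely, 200,000 keystrokes, and a fixed seed; these choices and the helper names are ours, picked only to keep the output small.

```python
import random
from collections import Counter

def monkey_text(n_keys, letters="ab", seed=0):
    """Strike n_keys keys uniformly at random; the keyboard is `letters` plus a space."""
    rng = random.Random(seed)
    keys = letters + " "
    return "".join(rng.choice(keys) for _ in range(n_keys))

def rank_frequency(text):
    """(word, count) pairs in decreasing order of count.

    str.split() with no argument discards the empty strings produced by runs of spaces.
    """
    return Counter(text.split()).most_common()

def mean_count_by_length(table):
    """Average count of the distinct words of each length."""
    by_length = {}
    for word, count in table:
        by_length.setdefault(len(word), []).append(count)
    return {length: sum(c) / len(c) for length, c in sorted(by_length.items())}

table = rank_frequency(monkey_text(200_000))
print(table[:6])
print(list(mean_count_by_length(table).items())[:5])
```

Average counts fall off geometrically with word length while the number of distinct words grows geometrically, which is the staircase behind the Zipf-like trend.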

Another possible cause for the Zipf distribution is a preferential attachment process, in which the value x of an item tends to grow at a rate proportional to x (intuitively, "the rich get richer" or "success breeds success"). Such a growth process results in the Yule–Simon distribution, which has been shown to fit word frequency versus rank in language[31] and population versus city rank[32] better than Zipf's law. It was originally derived to explain population versus rank in species by Yule, and applied to cities by Simon.
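One standard preferential attachment process is Simon's model of text growth, sketched below. With probability alpha the next token is a brand-new word; otherwise it copies a uniformly chosen earlier token, so an existing word is reused with probability proportional to its current count. The value alpha = 0.1, the text length, and the seed are arbitrary choices for illustration.

```python
import random
from collections import Counter

def simon_process(n_tokens, alpha=0.1, seed=0):
    """Simon's rich-get-richer model; returns a Counter of word ids."""
    rng = random.Random(seed)
    tokens = [0]  # word ids; the text starts with word 0
    next_id = 1
    while len(tokens) < n_tokens:
        if rng.random() < alpha:
            tokens.append(next_id)  # coin a new word
            next_id += 1
        else:
            # Copying a random past token picks a word in proportion to its count.
            tokens.append(rng.choice(tokens))
    return Counter(tokens)

counts = sorted(simon_process(100_000).values(), reverse=True)
print(counts[:10], len(counts))
```

A few early words accumulate thousands of occurrences while most words occur once or twice. In this model the count distribution is Yule–Simon with $\rho = 1/(1-\alpha)$, which corresponds to a rank-frequency exponent of about $1 - \alpha$, close to 1 when alpha is small.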

A similar explanation is based on Atlas models: systems of exchangeable positive-valued diffusion processes with drift and variance parameters that depend only on the rank of the process. It has been shown mathematically that Zipf's law holds for Atlas models that satisfy certain natural regularity conditions.[33][34]

Related laws


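A generalization of Zipf's law is the Zipf–Mandelbrot law, proposed by Benoit Mandelbrot, whose frequencies are:

$$f(k; N, q, s) = \frac{1}{C}\,\frac{1}{(k+q)^s}$$

The constant C normalizes the frequencies, $C = \sum_{k=1}^{N} (k+q)^{-s}$; for infinitely many items it is the Hurwitz zeta function $\zeta(s, q+1)$ evaluated at s.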
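A direct transcription of the Zipf–Mandelbrot frequencies, as a minimal sketch assuming a finite number of items N and an offset q ≥ 0 (the function name is ours). Setting q = 0 recovers the generalized Zipf distribution; a positive q flattens the head of the curve.

```python
def zipf_mandelbrot_pmf(k, n_items, q, s):
    """f(k; N, q, s) = (k + q)^(-s) / C, where C = sum over j = 1..N of (j + q)^(-s)."""
    if not 1 <= k <= n_items:
        return 0.0
    norm = sum((j + q) ** -s for j in range(1, n_items + 1))
    return (k + q) ** -s / norm

# With q = 0 the ratio f(1)/f(2) is 2, as in Zipf's law with s = 1;
# with q = 3 it drops to (2 + 3) / (1 + 3).
for q in (0.0, 3.0):
    print(q, zipf_mandelbrot_pmf(1, 100, q, 1.0) / zipf_mandelbrot_pmf(2, 100, q, 1.0))
```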

Zipfian distributions can be obtained from Pareto distributions by an exchange of variables.[20]

The Zipf distribution is sometimes called the discrete Pareto distribution[35] because it is analogous to the continuous Pareto distribution in the same way that the discrete uniform distribution is analogous to the continuous uniform distribution.

The tail frequencies of the Yule–Simon distribution are approximately

$$f(k; \rho) \approx \frac{[\text{constant}]}{k^{\rho+1}}$$

for any choice of ρ > 0.
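The tail behaviour can be checked numerically from the exact Yule–Simon probability mass function $f(k; \rho) = \rho\,B(k, \rho + 1)$ for $k \ge 1$. The sketch below evaluates the Beta function through log-gamma for numerical stability; the function name and the choice ρ = 1.5 are ours. The product $k^{\rho+1} f(k)$ levels off at the constant $\rho\,\Gamma(\rho+1)$.

```python
import math

def yule_simon_pmf(k, rho):
    """f(k; rho) = rho * B(k, rho + 1) for integer k >= 1, via log-gamma."""
    log_beta = math.lgamma(k) + math.lgamma(rho + 1) - math.lgamma(k + rho + 1)
    return rho * math.exp(log_beta)

rho = 1.5
for k in (10, 1_000, 100_000):
    # The first value should approach the second as k grows.
    print(k, k ** (rho + 1) * yule_simon_pmf(k, rho), rho * math.gamma(rho + 1))
```

For ρ = 1 the formula reduces to $1/(k(k+1))$, a convenient sanity check.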

In the parabolic fractal distribution, the logarithm of the frequency is a quadratic polynomial of the logarithm of the rank. This can markedly improve the fit over a simple power-law relationship.[36] Like fractal dimension, it is possible to calculate Zipf dimension, which is a useful parameter in the analysis of texts.[37]
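Written out, with fitted coefficients a, b, c (our notation), the parabolic fractal model is

$$\ln f(k) = a + b \ln k + c\,(\ln k)^2, \qquad \frac{d \ln f}{d \ln k} = b + 2c \ln k.$$

Setting c = 0 gives back a pure power law with exponent s = −b; a nonzero c lets the local log-log slope drift with rank, which is how the model absorbs the curvature at the ends of real rank-frequency plots.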

It has been argued that Benford's law is a special bounded case of Zipf's law,[36] with the connection between these two laws being explained by their both originating from scale-invariant functional relations from statistical physics and critical phenomena.[38] The ratios of probabilities in Benford's law are not constant. The leading digits of data satisfying Zipf's law with s = 1 satisfy Benford's law.

| n | Benford's law: $P(n) = \log_{10}(n+1) - \log_{10}(n)$ | $\dfrac{\log(P(n)/P(n-1))}{\log(n/(n-1))}$ |
|---|---|---|
| 1 | 0.30103000 | |
| 2 | 0.17609126 | −0.7735840 |
| 3 | 0.12493874 | −0.8463832 |
| 4 | 0.09691001 | −0.8830605 |
| 5 | 0.07918125 | −0.9054412 |
| 6 | 0.06694679 | −0.9205788 |
| 7 | 0.05799195 | −0.9315169 |
| 8 | 0.05115252 | −0.9397966 |
| 9 | 0.04575749 | −0.9462848 |
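The Benford table can be regenerated from its defining formula. In this sketch (helper names are ours) the third column is the local slope of the log-log curve between consecutive digits; it would be the constant −1 for a pure Zipf law with s = 1, and the fact that it is not constant is the point made in the text.

```python
import math

def benford(d):
    """P(d) = log10(d + 1) - log10(d) for leading digit d = 1..9."""
    return math.log10(d + 1) - math.log10(d)

def local_loglog_slope(d):
    """log(P(d)/P(d-1)) / log(d/(d-1)); undefined for d = 1."""
    return math.log(benford(d) / benford(d - 1)) / math.log(d / (d - 1))

for d in range(1, 10):
    slope = "" if d == 1 else f"{local_loglog_slope(d):.7f}"
    print(d, f"{benford(d):.8f}", slope)
```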

Occurrences


City sizes

Following Auerbach's 1913 observation, there has been substantial examination of Zipf's law for city sizes.[39] However, more recent empirical[40][41] and theoretical[42] studies have challenged the relevance of Zipf's law for cities.

Word frequencies in natural languages

In many texts in human languages, word frequencies approximately follow a Zipf distribution with exponent s close to 1; that is, the most common word occurs about n times the n-th most common one.

The actual rank-frequency plot of a natural language text deviates to some extent from the ideal Zipf distribution, especially at the two ends of the range. The deviations may depend on the language, on the topic of the text, on the author, on whether the text was translated from another language, and on the spelling rules used.[citation needed] Some deviation is inevitable because of sampling error.

At the low-frequency end, where the rank approaches N, the plot takes a staircase shape, because each word can occur only an integer number of times.

- Zipf's law plots for several languages

- First five books of the Old Testament (the Torah) in Hebrew, with vowels

In some Romance languages, the frequencies of the dozen or so most frequent words deviate significantly from the ideal Zipf distribution, because those words include articles inflected for grammatical gender and number.[citation needed]

In many East Asian languages, such as Chinese, Tibetan, and Vietnamese, each morpheme (word or word piece) consists of a single syllable; an English word is often translated as a compound of two such syllables. The rank-frequency table for those morphemes deviates significantly from the ideal Zipf law, at both ends of the range.[citation needed]

Even in English, the deviations from the ideal Zipf's law become more apparent as one examines large collections of texts. Analysis of a corpus of 30,000 English texts showed that only about 15% of the texts in it have a good fit to Zipf's law. Slight changes in the definition of Zipf's law can increase this percentage to close to 50%.[45]

In these cases, the observed frequency-rank relation can be modeled more accurately by separate Zipf–Mandelbrot distributions for different subsets or subtypes of words. This is the case for the frequency-rank plot of the first 10 million words of the English Wikipedia. In particular, the frequencies of the closed class of function words in English are better described with s lower than 1, while open-ended vocabulary growth with document size and corpus size requires s greater than 1 for convergence of the generalized harmonic series.[3]

When a text is encrypted in such a way that every occurrence of each distinct plaintext word is always mapped to the same encrypted word (as in the case of simple substitution ciphers, like the Caesar ciphers, or simple codebook ciphers), the frequency-rank distribution is not affected. On the other hand, if separate occurrences of the same word may be mapped to two or more different words (as happens with the Vigenère cipher), the Zipf distribution will typically have a flat part at the high-frequency end.[citation needed]
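The contrast between the two kinds of cipher can be demonstrated on a toy sentence. In this sketch the sentence, the Caesar shift of 3, and the Vigenère key "lemon" are made up for illustration, and the Vigenère key advances only on letters. A Caesar shift always maps a given word to the same ciphertext, so the sorted word counts are unchanged; under Vigenère, repeats of "the" land on different key offsets and split into several ciphertext words, lowering the top count.

```python
from collections import Counter
from string import ascii_lowercase

def shift(ch, k):
    """Rotate a lowercase letter k places through the alphabet."""
    return ascii_lowercase[(ascii_lowercase.index(ch) + k) % 26]

def caesar(text, k=3):
    """Same shift for every letter: each plaintext word always maps to one ciphertext word."""
    return "".join(shift(c, k) if c in ascii_lowercase else c for c in text)

def vigenere(text, key="lemon"):
    """Shift depends on the letter's position, so repeats of a word can encrypt differently."""
    out, i = [], 0
    for c in text:
        if c in ascii_lowercase:
            out.append(shift(c, ascii_lowercase.index(key[i % len(key)])))
            i += 1  # advance the key only on letters
        else:
            out.append(c)
    return "".join(out)

def rank_frequency(text):
    """Word counts in decreasing order (the words themselves are discarded)."""
    return sorted(Counter(text.split()).values(), reverse=True)

plain = "the cat and the dog and the bird saw the cat"
print(rank_frequency(plain), rank_frequency(caesar(plain)), rank_frequency(vigenere(plain)))
```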

Applications

Zipf's law has been used for extraction of parallel fragments of texts out of comparable corpora.[46] Laurance Doyle and others have suggested the application of Zipf's law for detection of alien language in the search for extraterrestrial intelligence.[47][48]

The frequency-rank word distribution is often characteristic of the author and changes little over time. This feature has been used in the analysis of texts for authorship attribution.[49][50]

The word-like sign groups of the 15th-century codex Voynich Manuscript have been found to satisfy Zipf's law, suggesting that the text is most likely not a hoax but rather written in an obscure language or cipher.[51][52]

Whale communication

Recent analysis of whale vocalization samples shows that they contain recurring phonemes whose distribution appears to closely obey Zipf's law.[53] While this is not proof that whale communication is a natural language, it is an intriguing discovery.

See also

- 1% rule (Internet culture) – Hypothesis that more people will lurk in a virtual community than will participate

- Benford's law – Observation that in many real-life datasets, the leading digit is likely to be small

- Bradford's law – Pattern of references in science journals

- Brevity law – Linguistics law

- Demographic gravitation – Social effect

- Frequency list – Bare list of a language's words in corpus linguistics

- Gibrat's law – Economic principle

- Hapax legomenon – Word appearing only once in a text or record

- Heaps' law – Heuristic for distinct words in a document

- King effect – Phenomenon in statistics where highest-ranked data points are outliers

- Long tail – Feature of some statistical distributions

- Lorenz curve – Graphical representation of the distribution of income or of wealth

- Lotka's law – An application of Zipf's law describing the frequency of publication by authors in any given field

- Menzerath's law – Linguistic law

- Pareto distribution – Probability distribution

- Pareto principle – Statistical principle about ratio of effects to causes

- Price's law

- Principle of least effort – Idea that agents prefer to do what's easiest

- Rank-size distribution – distribution of size by rank

- Stigler's law of eponymy – Observation that no scientific discovery is named after its discoverer

- Letter frequency

- Most common words in English

Notes

- as Zipf acknowledged[5]: 546

References

